AI Agents Fundamentals: ReAct, Tool Use, and Multi-Agent Systems

What’s a Chatbot vs. a Real AI Agent?

When you chat with ChatGPT, you’re talking to a chatbot: you ask, it answers. It’s a simple request-response interaction in which the model works within its context window, doesn’t reach into the outside world, and doesn’t act autonomously.

An AI agent is fundamentally different. An agent:

- Receives goals, not just questions

- Reasons about the task

- Uses tools: searches the web, queries databases, calls APIs, executes code

- Iterates: evaluates its own results and retries when needed

- Makes decisions: determines what steps to take and in what order

A simple analogy: a chatbot is a customer service rep who only answers from memory. An agent is a junior developer who gets a task, researches, experiments, fixes mistakes, and delivers the result.

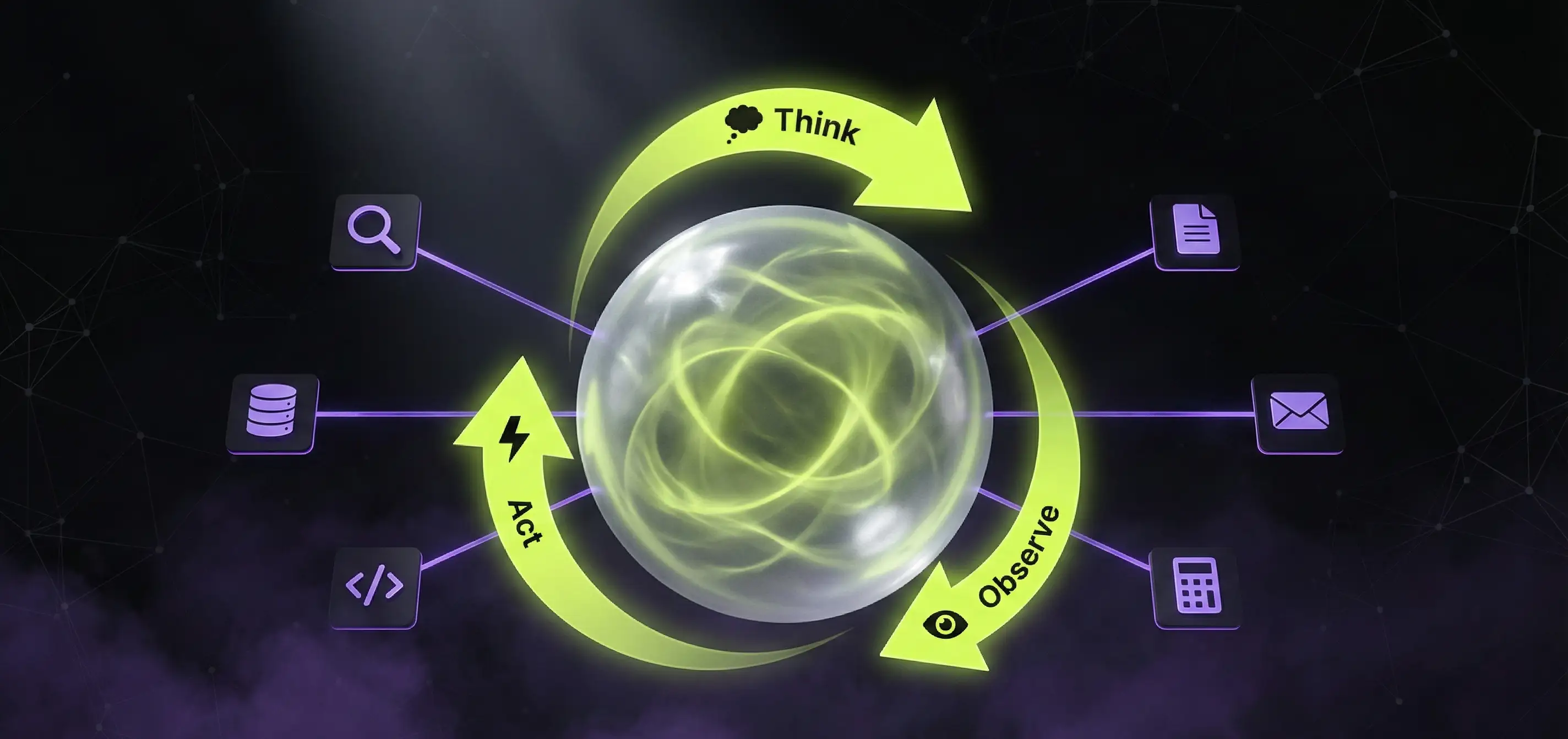

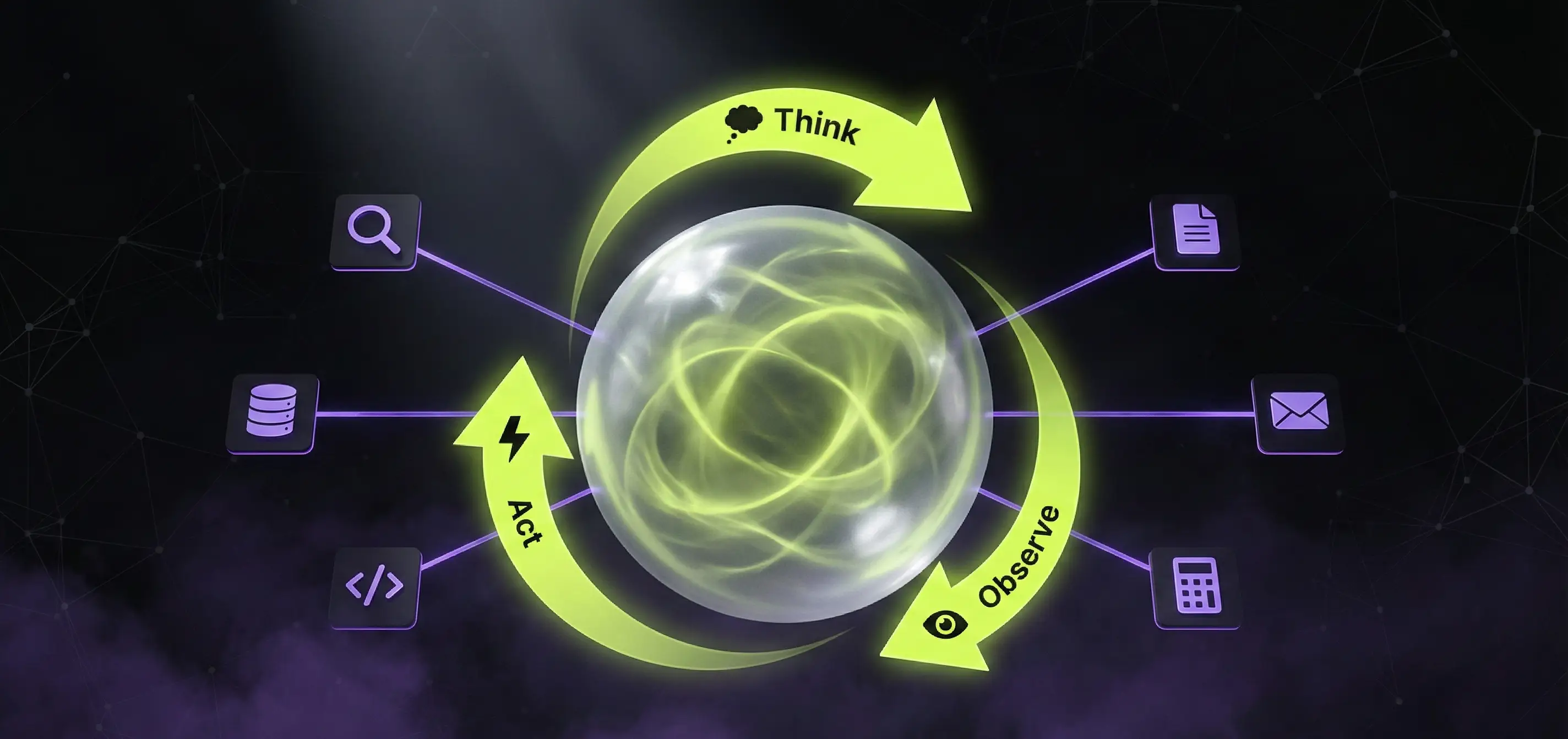

The ReAct Pattern: Reasoning + Acting

The foundation of AI agent operation is the ReAct (Reason + Act) pattern, published by Yao et al. in 2022. The idea is simple but powerful: instead of the LLM trying to solve everything in a single response, it thinks and acts in a cycle.

The ReAct Cycle

1. THOUGHT

"The user asks what Tesla's stock price was yesterday.

I don't know this off the top of my head - I need to search."

2. ACTION

→ search_web("Tesla stock price yesterday")

3. OBSERVATION

"Tesla's closing price was $248.50."

4. THOUGHT

"I have the data. Now I can answer the question."

5. FINAL ANSWER

"Tesla's closing price yesterday was $248.50."

The key insight is that the LLM explicitly reasons before each step, and the result of that reasoning determines the next action. This approach:

- Reduces hallucination: instead of making up an answer, the agent searches

- Increases reliability: reasoning steps are auditable

- Enables complex tasks: breaks multi-step problems into sub-tasks
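The cycle above can be sketched as a small loop. This is a minimal illustration, not a production agent: `scripted_llm` is a hypothetical stand-in that replays the Tesla example instead of calling a real model, and `search_web` returns a canned string rather than searching.

```python
from typing import Callable

# Hypothetical stand-in for a real search tool; returns a canned result.
def search_web(query: str) -> str:
    return "Tesla's closing price was $248.50."

TOOLS: dict[str, Callable[[str], str]] = {"search_web": search_web}

def scripted_llm(history: list[str]) -> dict:
    """Stand-in for an LLM call: picks the next step from the history so far."""
    observations = [line for line in history if line.startswith("OBSERVATION: ")]
    if not observations:
        return {
            "thought": "I don't know this off the top of my head - I need to search.",
            "action": "search_web",
            "input": "Tesla stock price yesterday",
        }
    return {
        "thought": "I have the data. Now I can answer the question.",
        "final_answer": observations[-1].removeprefix("OBSERVATION: "),
    }

def react_loop(question: str, llm=scripted_llm, max_steps: int = 5) -> tuple[str, list[str]]:
    """Alternate THOUGHT -> ACTION -> OBSERVATION until the model gives a final answer."""
    history = [f"QUESTION: {question}"]
    for _ in range(max_steps):
        step = llm(history)
        history.append(f"THOUGHT: {step['thought']}")
        if "final_answer" in step:
            return step["final_answer"], history
        result = TOOLS[step["action"]](step["input"])
        history.append(f"ACTION: {step['action']}({step['input']!r})")
        history.append(f"OBSERVATION: {result}")
    raise RuntimeError("step limit reached without a final answer")

answer, trace = react_loop("What was Tesla's stock price yesterday?")
```

The `max_steps` bound is a design choice: without it, a model that never produces a final answer would loop forever.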

Tool Use: The Agent’s Hands

An agent on its own can only think. Tools give it hands to interact with the outside world. Tool use is one of the most important capabilities of modern LLMs - Claude Opus 4.6, GPT-5.2, and Gemini 3 Pro all support it natively.

How Tool Use Works

When you use an LLM with tool use, here’s what happens:

- Tool definition: You specify the available tools (name, description, parameters)

- LLM decision: The model decides which tool to call and with what parameters based on context

- Execution: Your system executes the tool call

- Result return: You pass the tool’s output back to the LLM

- Next step: The LLM decides based on the result - call another tool, or provide the final answer

    # Example tool definition (Claude API)
    tools = [
        {
            "name": "search_database",
            "description": "Search the company knowledge base",
            "input_schema": {
                "type": "object",
                "properties": {
                    "query": {"type": "string", "description": "Search query"},
                    "limit": {"type": "integer", "description": "Max results"}
                },
                "required": ["query"]
            }
        },
        {
            "name": "send_email",
            "description": "Send an email to the specified address",
            "input_schema": {
                "type": "object",
                "properties": {
                    "to": {"type": "string"},
                    "subject": {"type": "string"},
                    "body": {"type": "string"}
                },
                "required": ["to", "subject", "body"]
            }
        }
    ]

Common Tool Categories

- Web search: Google/Bing API, or custom search index

- Database queries: SQL execution, vector search

- Code execution: Python interpreter, sandboxed JavaScript

- File operations: Reading, writing, filesystem navigation

- API calls: Any REST/GraphQL endpoint

- Calculator: Precise math operations (LLMs are notoriously bad at arithmetic)
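To make the execution and result-return steps concrete, here is a rough sketch of a tool dispatcher with a calculator tool. The names `TOOL_REGISTRY` and `execute_tool_call`, and the `{"name", "input"}` shape of a tool call, are assumptions made for illustration; they are not taken from any particular SDK.

```python
import ast
import operator

# Arithmetic operators the calculator tool is willing to evaluate.
_OPS = {
    ast.Add: operator.add, ast.Sub: operator.sub,
    ast.Mult: operator.mul, ast.Div: operator.truediv,
    ast.USub: operator.neg,
}

def calculator(expression: str) -> float:
    """Evaluate a plain arithmetic expression without using eval()."""
    def _eval(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](_eval(node.left), _eval(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](_eval(node.operand))
        raise ValueError("unsupported expression")
    return _eval(ast.parse(expression, mode="eval").body)

# Hypothetical registry mapping tool names to Python callables.
TOOL_REGISTRY = {"calculator": calculator}

def execute_tool_call(call: dict) -> dict:
    """Run one model-requested tool call and package the result for the model."""
    name, args = call["name"], call.get("input", {})
    if name not in TOOL_REGISTRY:
        return {"name": name, "is_error": True, "content": f"unknown tool: {name}"}
    try:
        output = TOOL_REGISTRY[name](**args)
        return {"name": name, "is_error": False, "content": str(output)}
    except Exception as exc:
        # Errors go back to the model as data so it can retry or change approach.
        return {"name": name, "is_error": True, "content": f"{type(exc).__name__}: {exc}"}

result = execute_tool_call({"name": "calculator", "input": {"expression": "12 * (3 + 4) / 2"}})
```

Returning errors as ordinary results, rather than raising, lets the model see what went wrong and decide on the next step.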

Agent Memory: Short-term and Long-term

A serious agent needs memory. The LLM’s context window is limited (even if it’s 200K tokens), and previous conversations are lost without persistence.

Short-term Memory (Working Memory)

Information held in the context window: the current task, steps taken so far, tool use results. This is the “working memory” available during a single run.

Implementation: A simple list of previous messages (conversation history) passed with every LLM call.
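A minimal sketch of such a message list, with trimming so it stays within a budget. Character count is used here as a crude stand-in for token count, and `trim_history` is a hypothetical helper; a real system would count tokens with the model's tokenizer.

```python
def trim_history(messages: list[dict], max_chars: int) -> list[dict]:
    """Keep the system message plus the most recent messages that fit the budget."""
    system = [m for m in messages if m["role"] == "system"]
    rest = [m for m in messages if m["role"] != "system"]
    budget = max_chars - sum(len(m["content"]) for m in system)
    kept: list[dict] = []
    for message in reversed(rest):  # walk from newest to oldest
        if len(message["content"]) > budget:
            break
        kept.append(message)
        budget -= len(message["content"])
    return system + kept[::-1]  # restore chronological order

history = [
    {"role": "system", "content": "You are a helpful agent."},
    {"role": "user", "content": "Find last quarter's revenue."},
    {"role": "assistant", "content": "Calling search_database..."},
    {"role": "user", "content": "Tool result: revenue was 1.2M."},
]
trimmed = trim_history(history, max_chars=90)
```

Dropping the oldest messages first is the simplest policy; summarizing them instead is a common alternative when early context still matters.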

Long-term Memory

Previous interactions, lessons learned, user preferences - information that persists across runs.

Implementation: A vector database (e.g., ChromaDB, Pinecone) where the agent saves information it deems important, retrieving relevant context on subsequent runs.
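The save-and-retrieve flow can be illustrated without a real vector database. In the sketch below, `LongTermMemory` is a hypothetical in-memory stand-in, and the "embedding" is just a bag-of-words count vector; a real setup would use an embedding model and a store such as ChromaDB or Pinecone.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Toy embedding: word counts (a real system would call an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class LongTermMemory:
    """In-memory stand-in for a vector database."""
    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def save(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def recall(self, query: str, k: int = 2) -> list[str]:
        """Return the k stored texts most similar to the query."""
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]

memory = LongTermMemory()
memory.save("User prefers weekly summary reports by email")
memory.save("The CRM API rate limit is 100 requests per minute")
memory.save("Customer Acme renewed their contract in March")
top = memory.recall("How does the user like to receive reports?", k=1)
```

On a later run, the recalled text would be prepended to the prompt so the agent starts with the relevant context.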

Episodic Memory

Specific past situations and their solutions: “Last time I encountered this type of task, this approach worked.” This is the least mature of the three memory types, but an active research area.

AI AGENT MEMORY

Short-term (context window)

· Current task

· Previous steps

· Tool use results

Long-term (vector DB)

· Past interactions

· User preferences

· Learned patterns

Episodic (structured)

· Situation → solution pairs

· Successful strategies

· Failed approaches

Planning Agents: Task Decomposition

A simple ReAct agent thinks step by step. A planning agent first plans the entire approach, then executes the plan.

The ReWOO Pattern (Reasoning Without Observation)

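Unlike ReAct, ReWOO plans all steps upfront before executing anything. This usually needs fewer LLM calls (one planning call plus tool execution, rather than a reasoning call before every action), which makes it cheaper and faster. The trade-off is that the plan cannot adapt to what the tools return along the way.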
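A rough sketch of the plan-then-execute idea follows. The plan is hard-coded here where a real system would obtain it from a single planner LLM call, the three tools return canned strings, and the `#E1`-style placeholders for earlier results follow the convention commonly used to describe ReWOO. All function names are hypothetical.

```python
import re

# Hypothetical tools with canned outputs, standing in for real integrations.
def search_knowledge_base(query: str) -> str:
    return "customer_id=42"

def query_crm(evidence: str) -> str:
    return f"CRM record for {evidence}: 2 open tickets"

def generate_summary(evidence: str) -> str:
    return f"Summary: {evidence}"

TOOLS = {f.__name__: f for f in (search_knowledge_base, query_crm, generate_summary)}

# The whole plan exists before any tool runs; "#E1" refers to the output of step 1.
PLAN = [
    ("#E1", "search_knowledge_base", "customer complaint"),
    ("#E2", "query_crm", "#E1"),
    ("#E3", "generate_summary", "#E2"),
]

def execute_plan(plan: list[tuple[str, str, str]]) -> dict[str, str]:
    """Run each step in order, substituting earlier results into later arguments."""
    evidence: dict[str, str] = {}
    for label, tool, arg in plan:
        resolved = re.sub(r"#E\d+", lambda m: evidence[m.group(0)], arg)
        evidence[label] = TOOLS[tool](resolved)
    return evidence

evidence = execute_plan(PLAN)
```

No LLM call happens between steps, which is where the savings come from; a final call would typically turn the collected evidence into the answer.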

1. PLAN

"For this task I need:

- Step 1: Search the knowledge base

- Step 2: Query the CRM based on results

- Step 3: Generate a summary"

2. EXECUTE

→ Step 1: search_knowledge_base("customer complaint")

→ Step 2: query_crm(customer_id)

→ Step 3: generate_summary(results)

3. FINAL ANSWER

Multi-Agent Systems: Collaborative Agents

The most exciting development in 2026 is the rise of multi-agent systems. The idea: instead of trying to teach a single agent everything, you create specialized agents that collaborate.

Typical Multi-Agent Architecture

Orchestrator Agent

(assigns tasks, collects results)

╱ │ ╲

Researcher Agent   Coder Agent   Reviewer Agent

- Orchestrator: Receives the task, breaks it into subtasks, assigns them to specialized agents

- Researcher agent: Web search, document analysis, data collection

- Coder agent: Code writing, refactoring, testing

- Reviewer agent: Code review, quality assurance, security checks
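The division of labor above can be sketched in a few lines. The three specialists here are hypothetical placeholder functions that return labeled strings; in practice each would wrap its own LLM prompt and tool set, and the orchestrator would ask an LLM how to decompose the task instead of using a fixed pipeline.

```python
from typing import Callable

# Hypothetical specialist agents, reduced to plain functions for illustration.
def researcher(task: str) -> str:
    return f"notes on {task}"

def coder(task: str) -> str:
    return f"code for {task}"

def reviewer(task: str) -> str:
    return f"review of {task}"

AGENTS: dict[str, Callable[[str], str]] = {
    "research": researcher,
    "code": coder,
    "review": reviewer,
}

def orchestrate(goal: str) -> list[tuple[str, str]]:
    """Route the goal through each specialist, feeding each output to the next."""
    results: list[tuple[str, str]] = []
    current = goal
    for role in ("research", "code", "review"):  # fixed order, for illustration only
        current = AGENTS[role](current)
        results.append((role, current))
    return results

results = orchestrate("rate limiter")
```

Keeping every intermediate result, not just the last one, gives the orchestrator something to inspect when a later stage fails.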

Communication Patterns

- Hierarchical: The orchestrator directs, agents report back

- Peer-to-peer: Agents communicate with each other (e.g., coder asks the researcher)

- Blackboard: Shared memory where all agents read and write
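Of the three patterns, the blackboard is the least obvious, so here is a minimal sketch. `Blackboard` and the two agent functions are hypothetical; the point is only that agents coordinate through shared state rather than by calling each other.

```python
from typing import Optional

class Blackboard:
    """Shared key-value store that every agent can read from and write to."""
    def __init__(self) -> None:
        self.entries: dict[str, str] = {}
        self.log: list[tuple[str, str]] = []  # (author, key) audit trail

    def write(self, author: str, key: str, value: str) -> None:
        self.entries[key] = value
        self.log.append((author, key))

    def read(self, key: str) -> Optional[str]:
        return self.entries.get(key)

def researcher(board: Blackboard) -> None:
    board.write("researcher", "requirements", "limit to 100 requests per minute")

def coder(board: Blackboard) -> None:
    requirements = board.read("requirements")
    if requirements is None:
        return  # nothing to build on yet; try again on a later pass
    board.write("coder", "implementation", f"rate limiter: {requirements}")

board = Blackboard()
for agent in (coder, researcher, coder):  # the coder's first pass finds nothing
    agent(board)
```

Because agents only react to what is on the board, the order in which they run matters less than in a hierarchical setup.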

Agent Frameworks in 2026

LangGraph

The agent orchestration framework from the LangChain team. Graph-based architecture where nodes are agent steps and edges define decision logic. Now a mature, production-grade solution widely used in 2026.

Strengths: State management, cyclical graphs, human approval checkpoints, persistent state

Best for: Complex, multi-step agents that need to manage state

OpenAI Agents SDK

OpenAI’s production-ready agent framework, the successor to the experimental Swarm project. The Agents SDK builds on the Responses API and is open-source (Python and TypeScript). It provides built-in tool use, handoffs between agents, guardrails, and tracing - and is provider-agnostic, meaning it works with non-OpenAI models too.

Strengths: Simple API, built-in tracing, AgentKit for higher-level orchestration

Best for: Teams in the OpenAI ecosystem who want to build production agents quickly

CrewAI

Role-based multi-agent framework. Each agent has a “role” (researcher, writer, reviewer), and the “crew” is the collaborating team.

Strengths: Rapid prototyping, intuitive API, 35K+ GitHub stars, 1.3M+ monthly PyPI downloads

Best for: Team-based automation where tasks are clearly divisible

AutoGen (Microsoft)

Conversation-based multi-agent framework where agents collaborate through dialogue.

Strengths: Flexible, human-in-the-loop support, research-oriented, 48K+ GitHub stars

Best for: Open-ended problem solving, iterative workflows with human involvement

Claude Tool Use + MCP (Anthropic)

Not a framework, but a native API capability. Claude models (Opus 4.6, Sonnet 5) can directly use tools - you define tools, and the model decides when and how to call them. The Model Context Protocol (MCP) is Anthropic’s open standard for connecting AI models to external tools and data sources - adopted by OpenAI, Google, and Microsoft in 2025.

Strengths: Simple integration, strong reasoning, safe by design, MCP ecosystem

Best for: Custom agent applications where you want full architectural control

Real-World Applications

Code Generation Agents

Modern developer tools have real agents working under the hood. Claude Code is a terminal-based agent that works directly with your codebase: it reads and writes files, executes commands, manages git, and performs complex refactoring autonomously. Cursor IDE works similarly as an agent: it understands your entire project context and performs multi-step code modifications. These tools are living examples of the ReAct pattern:

- Understand codebase context (via MCP and tool use)

- Read and write files

- Run tests and fix errors (iteration)

- Perform multi-step refactoring (planning)

Research Agents

Automated research: the agent receives a topic, searches the web, collects relevant sources, extracts key information, and produces a structured report. A good example: Perplexity AI is essentially a research agent with a web interface.

Customer Service Agents

Not a chatbot, but an agent: it has access to the CRM, knowledge base, and order management system. It understands context, independently searches for solutions, and when it can’t help, escalates to a human - with the full context included.

Safety and Guardrails

AI agents aren’t toys. They raise serious security concerns:

Prompt Injection

If an agent reads from external sources (web, email, documents), malicious content can manipulate its behavior. For example: a webpage contains hidden instructions that trick the agent into forwarding sensitive data.

Defense: Input sanitization, sandboxing, least privilege, output validation.

Runaway Agents

An agent can enter infinite loops or perform unexpected actions. If the agent has write permissions (sending emails, modifying databases), this is a real risk.

Defense: Iteration limits, cost caps, human approval for critical steps, audit logging.
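Iteration limits and cost caps are straightforward to enforce in the agent loop itself. The sketch below assumes a flat, made-up cost per step; `RunBudget`, `BudgetExceeded`, and `run_agent` are hypothetical names, and a real system would charge based on actual token usage.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a run hits its step limit or cost cap."""

class RunBudget:
    def __init__(self, max_steps: int, max_cost_usd: float) -> None:
        self.max_steps, self.max_cost_usd = max_steps, max_cost_usd
        self.steps, self.cost_usd = 0, 0.0

    def charge(self, cost_usd: float) -> None:
        """Reserve budget for one more step, or refuse if a limit would be crossed."""
        if self.steps >= self.max_steps:
            raise BudgetExceeded(f"step limit of {self.max_steps} reached")
        if self.cost_usd + cost_usd > self.max_cost_usd:
            raise BudgetExceeded(f"cost cap of ${self.max_cost_usd:.2f} reached")
        self.steps += 1
        self.cost_usd += cost_usd

def run_agent(step, budget: RunBudget) -> str:
    """Call step() until it returns a final answer or the budget runs out."""
    while True:
        budget.charge(cost_usd=0.01)  # assumed flat cost per step, for illustration
        result = step()
        if result is not None:
            return result

# An agent that never finishes is stopped after three steps.
stuck_budget = RunBudget(max_steps=3, max_cost_usd=1.00)
try:
    run_agent(lambda: None, stuck_budget)
    stopped = False
except BudgetExceeded:
    stopped = True
```

Checking the budget before each step, not after, means the agent never performs an action it cannot pay for.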

Guardrail Strategies

- Token and cost limits: Cap token usage and spend per run

- Tool whitelist: Only approved tools are accessible

- Output filtering: Check responses for sensitive content

- Human-in-the-loop: Require human approval for critical decisions
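Several of these strategies can sit in one wrapper around tool execution. The following is a sketch under stated assumptions: the tool names reuse the earlier example, `guarded_call` and the `approve` callback are hypothetical, and the `sk-...` pattern is only an illustrative guess at what a leaked credential might look like.

```python
import re
from typing import Callable

ALLOWED_TOOLS = {"search_database", "send_email"}    # tool allowlist
NEEDS_APPROVAL = {"send_email"}                       # tools with side effects
SECRET_PATTERN = re.compile(r"sk-[A-Za-z0-9]{8,}")    # assumed credential shape

def guarded_call(
    name: str,
    args: dict,
    tools: dict[str, Callable[..., str]],
    approve: Callable[[str, dict], bool],
) -> str:
    """Run a tool only if it is allowlisted and, where required, approved by a human."""
    if name not in ALLOWED_TOOLS:
        return f"blocked: {name} is not an approved tool"
    if name in NEEDS_APPROVAL and not approve(name, args):
        return f"blocked: {name} was not approved by a human"
    output = tools[name](**args)
    # Output filtering: redact anything that looks like a credential.
    return SECRET_PATTERN.sub("[REDACTED]", output)

# Hypothetical tool implementations with canned behavior.
tools = {
    "search_database": lambda query: f"config: key=sk-abcdef123456 for {query}",
    "send_email": lambda to, subject, body: f"sent to {to}",
    "delete_records": lambda table: "deleted",
}
deny_all = lambda name, args: False  # stands in for a human who rejects every request

blocked_tool = guarded_call("delete_records", {"table": "users"}, tools, deny_all)
blocked_email = guarded_call(
    "send_email", {"to": "a@example.com", "subject": "Hi", "body": "..."}, tools, deny_all
)
filtered = guarded_call("search_database", {"query": "billing"}, tools, deny_all)
```

In a real deployment the `approve` callback would pause the run and surface the pending action to a person, rather than returning immediately.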

What’s Next?

In 2026, AI agents have moved beyond the experimental phase, and development is moving quickly:

- Better reasoning: Claude Opus 4.6 and GPT-5.2 already demonstrate excellent multi-step reasoning capabilities

- Computer use: Anthropic’s computer use capability already enables agents to operate graphical interfaces (browsers, desktop apps)

- MCP ecosystem: The Model Context Protocol has become an open standard adopted by OpenAI, Google, and Microsoft - unified tool use across all AI systems

- Autonomous development: Claude Code and similar tools are already building complete applications independently

- Regulation: The EU AI Act and other regulations are creating frameworks for agent use

AI agents aren’t the future - they’re the present. The question isn’t whether to use them, but how to use them safely and effectively.

If you’re planning an AI agent-based solution for your business, the AppForge team can help with architecture design, framework selection, and secure implementation.
