RAG Systems: How to Build an Intelligent Enterprise Knowledge Base

Why an LLM Alone Isn’t Enough

LLMs (GPT-5.2, Claude Sonnet 5, Gemini 3 Pro) possess vast knowledge - but they have two fundamental limitations:

- Knowledge cutoff: The model only “knows” up to its training data. Anything that happened after that is invisible.

- Hallucination: When it doesn’t know the answer, it often makes one up - confidently and convincingly, but falsely.

In enterprise environments, this is catastrophic. If your AI chatbot gives a customer false information or references outdated internal documents, that’s a business risk.

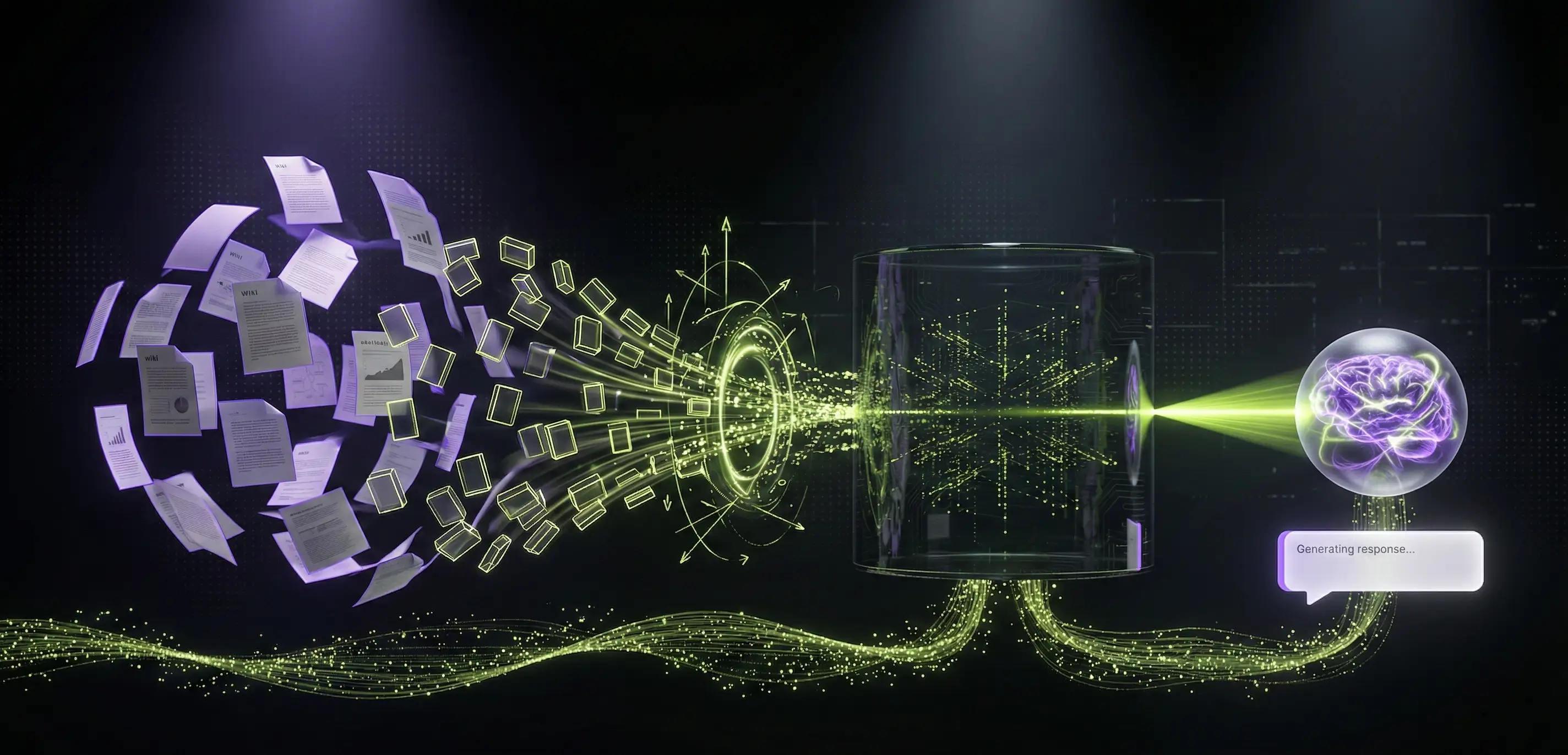

RAG (Retrieval-Augmented Generation) addresses exactly this problem: instead of answering from its “head,” the LLM first retrieves relevant documents, then generates a response grounded in them. It doesn’t invent - it cites.

The RAG Pipeline Step by Step

A RAG system consists of two main phases: indexing (done up front and repeated whenever documents change) and querying (on every question).

Phase 1: Indexing

Documents → Chunking → Embedding → Vector Database

Document Ingestion

The first step: gather the documents that form the foundation of your knowledge base. These can be:

- PDFs (policies, contracts, documentation)

- Web pages (help center, FAQ)

- Confluence/Notion pages

- Email archives

- Database records

Each source requires a different loader. Both LangChain and LlamaIndex offer plenty of built-in loaders.

Chunking

The LLM’s context window is limited, and retrieval accuracy degrades when large text blocks are embedded as single vectors, because one vector has to represent too many topics at once. The solution: split documents into smaller pieces (chunks).

Chunking strategy is critically important: chunk size and overlap can affect retrieval quality at least as much as the choice of embedding model.

Main strategies:

| Strategy | Description | When to use |
|---|---|---|
| Fixed-size | 512 tokens, 10-20% overlap | General purpose, good starting point |
| Semantic | Groups sentences by similarity | Mixed-content documents |
| Recursive | Splits at chapter → paragraph → sentence level | Structured documents |
| Agentic | LLM decides split points | Complex, varied documents |
| Late chunking | Full document embedding first, then split | Context-sensitive content |

Practical recommendation: Start with 512-token fixed-size chunks with 10-20% overlap. This works well for most use cases. If quality is unsatisfactory, try semantic chunking.
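The recommended starting point can be sketched in a few lines. This is a minimal illustration, assuming whitespace-separated words stand in for tokens (a real pipeline would count tokens with the embedding model's own tokenizer). The function name `chunk_fixed_size` and its parameters are made up for this example and do not come from any library.

```python
def chunk_fixed_size(text: str, chunk_size: int = 512,
                     overlap_ratio: float = 0.15) -> list[str]:
    """Split text into fixed-size chunks that overlap by a fraction of their size.

    Assumption: tokens are approximated by whitespace-separated words.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap_ratio < 1:
        raise ValueError("overlap_ratio must be in [0, 1)")

    tokens = text.split()
    overlap = int(chunk_size * overlap_ratio)
    step = chunk_size - overlap  # how far the window advances each time

    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(" ".join(tokens[start:start + chunk_size]))
        if start + chunk_size >= len(tokens):
            break  # this window already reached the end of the text
    return chunks
```

The overlap means the tail of one chunk is repeated at the head of the next, so a sentence that straddles a boundary still appears whole in at least one chunk.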

Embedding Generation

Each chunk is converted into a vector by an embedding model - a dense numerical representation that captures the text’s meaning. Vectors for semantically similar text end up close together in vector space.

Popular embedding models (2026):

- OpenAI text-embedding-3-large: 3072 dimensions, excellent quality, paid API

- OpenAI text-embedding-3-small: 1536 dimensions, good value for money

- Cohere Embed v4: Multimodal (text + image), 128K context, 100+ languages

- Voyage AI voyage-3-large: SOTA performance across 100 datasets, Matryoshka dimensions (256-2048), quantization support

- Open-source: nomic-embed-text-v2-moe (MoE architecture, multilingual), BGE-M3 (dense + sparse + multi-vector retrieval, 100+ languages)

Important: The embedding model choice is hard to reverse - vectors produced by different models are not comparable, so switching later means re-embedding all documents.

Vector Database

Embeddings are stored in a vector database that enables fast similarity search (cosine similarity, dot product, euclidean distance).
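To make the three similarity measures concrete, here is a small pure-Python sketch with a brute-force top-k search. It is only an illustration: the helper names are invented for this example, and production vector databases typically rely on approximate nearest-neighbour indexes rather than scanning every vector.

```python
import math


def dot_product(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Dot product of the two vectors after normalising their lengths."""
    norm_a = math.sqrt(dot_product(a, a))
    norm_b = math.sqrt(dot_product(b, b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot_product(a, b) / (norm_a * norm_b)


def euclidean_distance(a: list[float], b: list[float]) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def top_k_by_cosine(query: list[float], vectors: list[list[float]],
                    k: int = 3) -> list[int]:
    """Return the indices of the k stored vectors most similar to the query."""
    scored = [(cosine_similarity(query, v), i) for i, v in enumerate(vectors)]
    scored.sort(reverse=True)
    return [i for _, i in scored[:k]]
```

Cosine similarity ignores vector length, while dot product and Euclidean distance do not, so use the metric your embedding model was trained for.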

Vector database comparison (2026):

| Database | Type | Strength | When to choose |
|---|---|---|---|
| Pinecone | Managed SaaS | Scaling, simplicity | When you don’t want to manage infrastructure |
| Qdrant | Open-source | Performance, filtering | When speed and self-hosting matter |
| Weaviate | Open-source | Hybrid search, modular | When keyword + vector search is needed |
| ChromaDB | Open-source | Simplicity, developer-friendly | Prototyping, small-medium scale |
| Milvus | Open-source | Enterprise scale, 35K+ GitHub stars | Large datasets, enterprise |
| pgvector | PostgreSQL ext. | Existing PG infrastructure | When you already have PostgreSQL |

Benchmark data (2026): Pinecone shows ~50,000 insertions/s and ~5,000 queries/s, Qdrant performs similarly (~45,000 / ~4,500), while ChromaDB excels in developer convenience but is slower at scale (~25,000 / ~2,000).

Phase 2: Querying

Question → Embedding → Vector Search → Top-K Documents → LLM Response Generation

- Embed the user’s question (using the same model as indexing)

- Vector search: find the most similar chunks (typically top 3-10)

- Insert the retrieved chunks as context into the LLM prompt

- The LLM generates a response based on the context

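```python
# Simple RAG query (pseudocode: embed, vector_db, and llm are placeholders
# for your embedding client, vector store, and LLM client)
question = "What is our return policy?"

# 1. Question embedding (same model as used for indexing)
question_embedding = embed(question)

# 2. Vector search
relevant_chunks = vector_db.search(question_embedding, top_k=5)

# 3. Prompt construction: join the chunk texts so the prompt contains
#    readable passages instead of a Python list representation
context = "\n\n".join(str(chunk) for chunk in relevant_chunks)
prompt = f"""Answer the question based on the following documents.
If the documents don't contain the answer, say you don't know.

Documents:
{context}

Question: {question}"""

# 4. LLM response generation
answer = llm.generate(prompt)
```

Hybrid Search: Vector + Keyword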

Pure vector search isn’t always enough. When a user searches for an exact term (e.g., “GDPR Article 17”), semantic search may not find it - because vector search optimizes for meaning, not exact word matching.

Hybrid search combines:

- Vector search: Semantic similarity (based on meaning)

- Keyword search (BM25): Exact word matching (based on terms)

The two result sets are combined using reciprocal rank fusion (RRF) or weighted averaging. Weaviate and Qdrant natively support hybrid search.
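Reciprocal rank fusion is simple enough to sketch directly. Each document earns 1 / (k + rank) from every result list it appears in, and the sums decide the final order. The function name and the document IDs below are illustrative; k = 60 is a commonly used default, not a value taken from this article.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one ranking.

    Each list contributes 1 / (k + rank) per document, with rank starting at 1.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


# Hypothetical result lists from the two retrievers
vector_hits = ["a", "b", "c"]
keyword_hits = ["c", "a", "d"]
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
# fused == ["a", "c", "b", "d"]: documents found by both retrievers rise to the top
```

Because RRF only looks at ranks, it avoids having to put BM25 scores and cosine similarities on a common scale, which is the main difficulty with weighted averaging.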

Reranking: The Second Filter

Vector search is fast but not always precise. Reranking applies a second, more accurate model that re-scores the retrieved documents against the question and keeps only the best ones.

Popular reranker models:

- Cohere Rerank v3.5: SaaS, simple API, excellent quality, reasoning capabilities

- BGE Reranker v2: Open-source, good value

- Cross-encoder models: Slower but more accurate than bi-encoder embeddings

Reranking typically yields a 20-30% improvement in answer relevancy - small investment, big impact.
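The reranking step itself is just "score every candidate against the question, sort, keep the top few". The sketch below shows that shape with a pluggable scoring function. `word_overlap_score` is a deliberately crude stand-in so the example runs without a model; in a real system you would pass in a call to a cross-encoder or a hosted reranker instead. All names here are illustrative.

```python
from typing import Callable


def rerank(question: str, candidates: list[str],
           score_fn: Callable[[str, str], float], top_n: int = 3) -> list[str]:
    """Re-score retrieved candidates against the question and keep the best top_n."""
    scored = [(score_fn(question, doc), doc) for doc in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_n]]


def word_overlap_score(question: str, document: str) -> float:
    """Toy scorer (assumption): fraction of question words found in the document."""
    question_words = set(question.lower().split())
    document_words = set(document.lower().split())
    if not question_words:
        return 0.0
    return len(question_words & document_words) / len(question_words)
```

A typical setup retrieves generously (say the top 20-50 chunks) and lets the reranker cut that down to the handful that go into the prompt.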

Advanced RAG Patterns

Multi-step RAG

The user’s question isn’t always clear. Multi-step RAG first clarifies the question (query rewriting, query expansion), then searches in multiple rounds.

Original question: "What's happening with the project?"

→ Rewritten query 1: "What is the current status of the Alpha project?"

→ Rewritten query 2: "What are the open tasks for the Alpha project?"

→ Unified answer based on both searches

Self-RAG

The LLM evaluates its own response: it checks whether the retrieved documents are actually relevant and whether the answer faithfully reflects the sources. If not, it searches again or self-corrects.
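As a rough sketch, that behaviour is a retry loop around retrieval and generation. Every callable below (`retrieve`, `generate`, `is_relevant`, `is_faithful`, `rewrite`) is a hypothetical hook you would implement with your own retriever and LLM prompts; none of them is a real library API.

```python
from typing import Callable, Optional


def self_checking_answer(
    question: str,
    retrieve: Callable[[str], list[str]],
    generate: Callable[[str, list[str]], str],
    is_relevant: Callable[[str, str], bool],
    is_faithful: Callable[[str, list[str]], bool],
    rewrite: Callable[[str], str],
    max_rounds: int = 3,
) -> Optional[str]:
    """Retrieve, generate, and self-check; retry with a rewritten query on failure."""
    query = question
    for _ in range(max_rounds):
        # Keep only documents the relevance check accepts for the original question
        docs = [doc for doc in retrieve(query) if is_relevant(question, doc)]
        if docs:
            answer = generate(question, docs)
            if is_faithful(answer, docs):
                return answer
        # Nothing relevant, or the answer was not grounded: search again
        query = rewrite(query)
    return None  # caller should fall back to "I don't know"
```

Capping the number of rounds matters: each retry costs extra LLM calls, and returning "I don't know" is better than looping or guessing.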

Agentic RAG

The agent autonomously decides on search strategy: which sources to search, what filters to apply, whether multi-round search is needed. This is the combination of the ReAct pattern and RAG.

Graph RAG

Models relationships between documents (knowledge graph). Particularly useful when entity connections matter - e.g., for questions like “Who works on the Alpha project?” where the answer is scattered across multiple documents.
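A toy version of the idea: store facts extracted from documents as (subject, relation, object, source) entries and answer entity questions by matching on them. The data and names below are invented for illustration; a real Graph RAG system would extract the triples with an LLM and keep them in a graph database.

```python
Triple = tuple[str, str, str, str]  # subject, relation, object, source document

# Hypothetical facts extracted from three different documents
TRIPLES: list[Triple] = [
    ("Anna", "works_on", "Alpha", "kickoff_notes.pdf"),
    ("Ben", "works_on", "Alpha", "sprint_plan.docx"),
    ("Anna", "works_on", "Beta", "roadmap.md"),
]


def subjects_of(triples: list[Triple], relation: str, obj: str) -> dict[str, list[str]]:
    """Return {subject: [source documents]} for every triple matching (?, relation, obj)."""
    matches: dict[str, list[str]] = {}
    for subject, rel, target, source in triples:
        if rel == relation and target == obj:
            matches.setdefault(subject, []).append(source)
    return matches


alpha_team = subjects_of(TRIPLES, "works_on", "Alpha")
# alpha_team == {"Anna": ["kickoff_notes.pdf"], "Ben": ["sprint_plan.docx"]}
```

Note that neither source document alone lists the whole team; the answer only appears once the facts are connected, which is exactly the case where plain chunk retrieval struggles.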

Evaluation: How Do You Know It Works?

Evaluating a RAG system isn’t trivial. The key metrics:

Faithfulness

Does the generated answer match the source documents? Is the LLM hallucinating information?

Answer Relevancy

Does the response actually answer the question? Does it stay on topic?

Context Precision

Are the retrieved documents actually relevant to the question?

Context Recall

Did the system find all relevant documents?
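For the two retrieval metrics, a simplified set-based version is easy to compute if you have a small test set with hand-labelled relevant chunks. This sketch assumes such labels exist; evaluation frameworks may define and compute these metrics differently (for example with LLM-based judgments), so treat it as an intuition aid rather than a reference definition.

```python
def context_precision(retrieved: list[str], relevant: set[str]) -> float:
    """Share of retrieved chunk IDs that are actually relevant."""
    if not retrieved:
        return 0.0
    hits = sum(1 for chunk_id in retrieved if chunk_id in relevant)
    return hits / len(retrieved)


def context_recall(retrieved: list[str], relevant: set[str]) -> float:
    """Share of relevant chunk IDs that the retriever found."""
    if not relevant:
        return 1.0  # nothing to find, so nothing was missed
    return len(set(retrieved) & relevant) / len(relevant)


# Hypothetical labelled example: 4 chunks retrieved, 3 chunks truly relevant
retrieved_ids = ["c1", "c2", "c3", "c4"]
relevant_ids = {"c1", "c3", "c9"}
precision = context_precision(retrieved_ids, relevant_ids)  # 2 of 4 retrieved are relevant
recall = context_recall(retrieved_ids, relevant_ids)        # 2 of 3 relevant were found
```

Low precision points at noisy retrieval (consider reranking or a smaller top-k), while low recall points at missed documents (consider hybrid search or different chunking).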

Evaluation frameworks: RAGAS and LangSmith provide automated evaluation on these metrics.

Practical Example: Enterprise Knowledge Base

Let’s say you’re building a RAG-powered internal knowledge base for a 200-person company. Here’s what the project looks like:

1. Data Sources

- 500+ internal documents (PDF, Word)

- Confluence wiki (300+ pages)

- Historical support tickets (5,000+)

- Email archive (curated)

2. Tech Stack

- Embedding: OpenAI text-embedding-3-small (cost-effective)

- Vector DB: Qdrant (self-hosted, Docker)

- LLM: Claude Sonnet 5 (excellent reasoning)

- Framework: LangChain v0.3 + FastAPI

- Frontend: Custom chat interface

3. Pipeline

Confluence API + PDFs + Support tickets

↓

Chunking (512 tokens, 10% overlap)

↓

Embedding generation (text-embedding-3-small)

↓

Qdrant vector DB

↓

Hybrid search (vector + BM25)

↓

Reranking (Cohere Rerank v3.5)

↓

LLM response generation (Claude Sonnet 5)

↓

Response + source citations

4. Cost Estimate (Monthly)

- Qdrant hosting (VPS): ~$30-50/month

- OpenAI embedding API: ~$10-30/month (a generous ceiling: at ~$0.02 / 1M tokens, embedding 500 documents plus the daily queries usually costs far less)

- Claude API: ~$50-200/month (100-500 questions per day)

- Total: ~$90-280/month

That’s a fraction of what a dedicated support team member would cost.

When NOT to Use RAG

RAG isn’t for everything. Skip it if:

- Questions are simple and static: If an FAQ page solves the problem, don’t build a RAG system

- Not enough data: For 10-20 documents, don’t build RAG - put the full text in the prompt

- Real-time data is needed: RAG stores documents, not real-time data. For that, you need API integration + agents

- 100% accuracy is required: RAG reduces hallucination but doesn’t eliminate it. Critical decisions need human approval

- Data is structured: If the data is queryable via SQL, don’t vectorize it - use a Text-to-SQL approach

Costs and Scaling

Embedding Costs

Embedding documents is mostly a one-time cost (unless you update them frequently); each query adds one small embedding call:

- OpenAI text-embedding-3-small: ~$0.02 / 1M tokens

- OpenAI text-embedding-3-large: ~$0.13 / 1M tokens

- Open-source (self-hosted): infrastructure cost only, no per-token fees
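A quick back-of-the-envelope calculation shows how small the indexing bill is at these prices. The average of 5,000 tokens per document is an assumption chosen for illustration; measure your own corpus before budgeting.

```python
def embedding_cost_usd(num_documents: int, avg_tokens_per_document: int,
                       price_per_million_tokens: float) -> float:
    """One-off cost of embedding a corpus at a given per-token price."""
    total_tokens = num_documents * avg_tokens_per_document
    return total_tokens / 1_000_000 * price_per_million_tokens


# 500 documents at an assumed 5,000 tokens each = 2.5M tokens
small_cost = embedding_cost_usd(500, 5_000, 0.02)  # text-embedding-3-small
large_cost = embedding_cost_usd(500, 5_000, 0.13)  # text-embedding-3-large
print(f"small: ${small_cost:.3f}, large: ${large_cost:.3f}")
# prints "small: $0.050, large: $0.325"
```

Even re-embedding the whole corpus several times while you tune chunking stays in the range of cents to a few dollars at this scale.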

LLM Costs (Response Generation)

This is the main ongoing cost, as every question requires an LLM call:

- Claude Sonnet 5: ~$3 / 1M input tokens, $15 / 1M output tokens

- GPT-5.2: ~$1.75 / 1M input tokens, $14 / 1M output tokens

- GPT-5 mini: ~$0.25 / 1M input tokens, $2 / 1M output tokens

- Open-source (Llama 4, Mistral Large 2): infrastructure cost only, no API fees
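To turn per-token prices into a monthly figure, multiply them by your question volume and the tokens each question consumes. The token counts below (about 3,000 input tokens for the prompt plus retrieved chunks, about 300 output tokens) are assumptions for illustration, not measurements.

```python
def monthly_llm_cost_usd(questions_per_day: int,
                         input_tokens_per_question: int,
                         output_tokens_per_question: int,
                         input_price_per_million: float,
                         output_price_per_million: float,
                         days: int = 30) -> float:
    """Estimate monthly response-generation cost from volume and token prices."""
    questions = questions_per_day * days
    input_cost = questions * input_tokens_per_question / 1_000_000 * input_price_per_million
    output_cost = questions * output_tokens_per_question / 1_000_000 * output_price_per_million
    return input_cost + output_cost


# Claude Sonnet 5 prices from the list above: $3 input, $15 output per 1M tokens
low_volume = monthly_llm_cost_usd(100, 3_000, 300, 3.0, 15.0)   # 40.5
high_volume = monthly_llm_cost_usd(500, 3_000, 300, 3.0, 15.0)  # 202.5
```

Under these assumptions, 100-500 questions per day comes out at roughly $40-200 per month, which is in line with the estimate in the practical example. Input tokens dominate, so trimming the number or size of retrieved chunks is the most direct lever.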

Scaling Tips

- Cache: Cache responses for frequently asked questions

- Smaller model: Response generation doesn’t always need the most powerful model

- Batch indexing: Don’t embed in real-time - schedule batch processing

- Chunk size optimization: Smaller chunks = more vectors = more expensive search, but more precise
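The caching tip can be as simple as an exact-match cache keyed on a normalised form of the question. This is a minimal in-memory sketch; the class name and the normalisation rule (lowercase, collapsed whitespace) are assumptions for illustration, and a production setup would more likely use a shared store with expiry.

```python
import hashlib
from typing import Callable


class AnswerCache:
    """In-memory cache of answers keyed by the normalised question text."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}

    @staticmethod
    def _key(question: str) -> str:
        # Lowercase and collapse whitespace so trivial variations share one entry
        normalized = " ".join(question.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get_or_compute(self, question: str, compute: Callable[[str], str]) -> str:
        """Return the cached answer, running the RAG pipeline only on a miss."""
        key = self._key(question)
        if key not in self._store:
            self._store[key] = compute(question)
        return self._store[key]

    def clear(self) -> None:
        """Call after re-indexing so stale answers are not served."""
        self._store.clear()
```

Remember to clear or expire the cache whenever the underlying documents change, otherwise the system will keep citing outdated content, which is the very problem RAG is meant to avoid.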

Summary

RAG isn’t magic - but it’s the best method for enriching LLMs with your own data. The key is the right architecture: proper chunking, the right embedding model, a fast vector database, and the hybrid search + reranking combination.

In 2026, RAG is no longer experimental technology - it’s a production-ready solution used by the world’s largest enterprises. The question isn’t “does it work,” but “how do you integrate it into your system.”

If you’re planning a RAG-powered knowledge base or chatbot, the AppForge team can help with the full implementation - from data processing to vector database selection to production deployment.
