n8n vs LangChain: Which Should You Choose for AI Workflow Automation?

Two Tools, Two Philosophies

If you’re planning AI-powered automation, you’ll inevitably face this question: n8n or LangChain? The answer isn’t straightforward, because these are fundamentally different tools that solve different problems. They are increasingly compared anyway, because both operate in the AI workflow space.

In this article, we’ll walk through the key differences, concrete use cases, and help you decide which one (or both) fits your project.

What Is n8n?

n8n (pronounced “n-eight-n”) is an open-source, visual workflow automation platform. Think of it as a self-hosted Zapier on steroids. The core idea: you connect services, APIs, and databases through a drag-and-drop interface - and everything is visually traceable.

n8n’s Strengths

- 400+ built-in integrations: Slack, Gmail, Google Sheets, Notion, PostgreSQL, HTTP requests - you can connect virtually anything

- Visual editor: Build complex workflows without writing code

- Self-hosting: Run it on your own server with full control over your data

- AI nodes: n8n 1.x has significantly expanded its AI capabilities - built-in LLM nodes, AI agent nodes (with sub-agent support), vector store integration, and MCP (Model Context Protocol) compatibility

- Code node: When visual nodes aren’t enough, extend with JavaScript or Python

n8n Pricing (2026)

The n8n cloud version starts at $20/month (2,500 executions/month), with the Pro plan at $50/month (10,000 executions). The self-hosted version is free as software, but real infrastructure costs range from $50-300/month depending on scale. n8n switched to execution-based billing in 2025, and by 2026 this model has become common across the industry: workflows are unlimited, but each individual run counts toward your quota.
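To make the two cloud tiers easier to compare, here is a small sketch that works out the price per execution from the figures quoted above. The label `entry` for the $20 tier and the helper names `cost_per_execution` and `cheapest_plan` are placeholders chosen for this example, not n8n terminology.

```python
from typing import Optional

# Prices and quotas are the figures quoted in this article; "entry" is a
# placeholder label for the $20 tier, whose name the article does not give.
PLANS = {
    "entry": {"usd_per_month": 20, "executions": 2_500},
    "pro": {"usd_per_month": 50, "executions": 10_000},
}


def cost_per_execution(plan: str) -> float:
    """Monthly price divided by the number of included executions."""
    p = PLANS[plan]
    return p["usd_per_month"] / p["executions"]


def cheapest_plan(executions_needed: int) -> Optional[str]:
    """Return the cheapest listed plan whose quota covers the volume, if any."""
    candidates = [
        (p["usd_per_month"], name)
        for name, p in PLANS.items()
        if p["executions"] >= executions_needed
    ]
    return min(candidates)[1] if candidates else None


if __name__ == "__main__":
    for name in PLANS:
        print(f"{name}: ${cost_per_execution(name):.4f} per execution")
    print("8,000 runs/month ->", cheapest_plan(8_000))
```

With these numbers the larger tier is cheaper per run, so the deciding factor is usually whether your monthly volume fits the smaller quota at all.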

What Is LangChain?

LangChain is a Python (and JavaScript/TypeScript) framework designed specifically for building LLM-powered applications. It’s not a no-code tool - it’s a developer library for building complex AI pipelines, agents, and RAG systems.

LangChain’s Strengths

- Deep LLM integration: Native support for all major models (GPT-5.2, Claude Opus 4.6, Gemini 3 Pro, Mistral Large 2, Llama 4), plus locally hosted models through Ollama

- LCEL (LangChain Expression Language): Declarative pipeline building where you chain components together

- Agent framework: ReAct pattern, tool use, custom agents - LangChain was one of the earliest widely adopted agent frameworks

- Ecosystem: LangSmith (monitoring), LangGraph (complex agent orchestration), LangServe (deployment)

- Community: Massive developer community, extensive tutorials, and continuous development
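The "chain components together" idea behind LCEL can be illustrated without installing anything. The sketch below is a toy: the `Step` class, `fake_model`, and the other names are made up for this example and are not LangChain's implementation. It only shows how a pipe operator can compose steps declaratively.

```python
from typing import Any, Callable


class Step:
    """A toy pipeline component (not LangChain's Runnable class)."""

    def __init__(self, fn: Callable[[Any], Any]):
        self.fn = fn

    def __or__(self, other: "Step") -> "Step":
        # `a | b` builds a new step that runs a, then feeds its output to b.
        return Step(lambda value: other.fn(self.fn(value)))

    def invoke(self, value: Any) -> Any:
        return self.fn(value)


# Hypothetical components: a prompt template, a fake model, an output parser.
prompt = Step(lambda topic: f"Summarize in one line: {topic}")
fake_model = Step(lambda text: {"content": text.upper()})  # stands in for an LLM call
parser = Step(lambda message: message["content"])

# The pipeline is declared once and can then be invoked with any input.
chain = prompt | fake_model | parser

if __name__ == "__main__":
    print(chain.invoke("n8n vs LangChain"))
```

In LangChain itself the same shape is typically written as `prompt | model | parser` and run with `.invoke(...)`, with real prompt, model, and parser objects in place of these stubs.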

The LangChain Ecosystem (2026)

LangChain v0.3+ has evolved significantly by 2026. With the maturation of LangGraph, the framework effectively split into two parts:

- LangChain: Core components and simple chains (RAG, summarization, basic chatbots)

- LangGraph: Complex, stateful, cyclical agent workflows with graph-based architecture

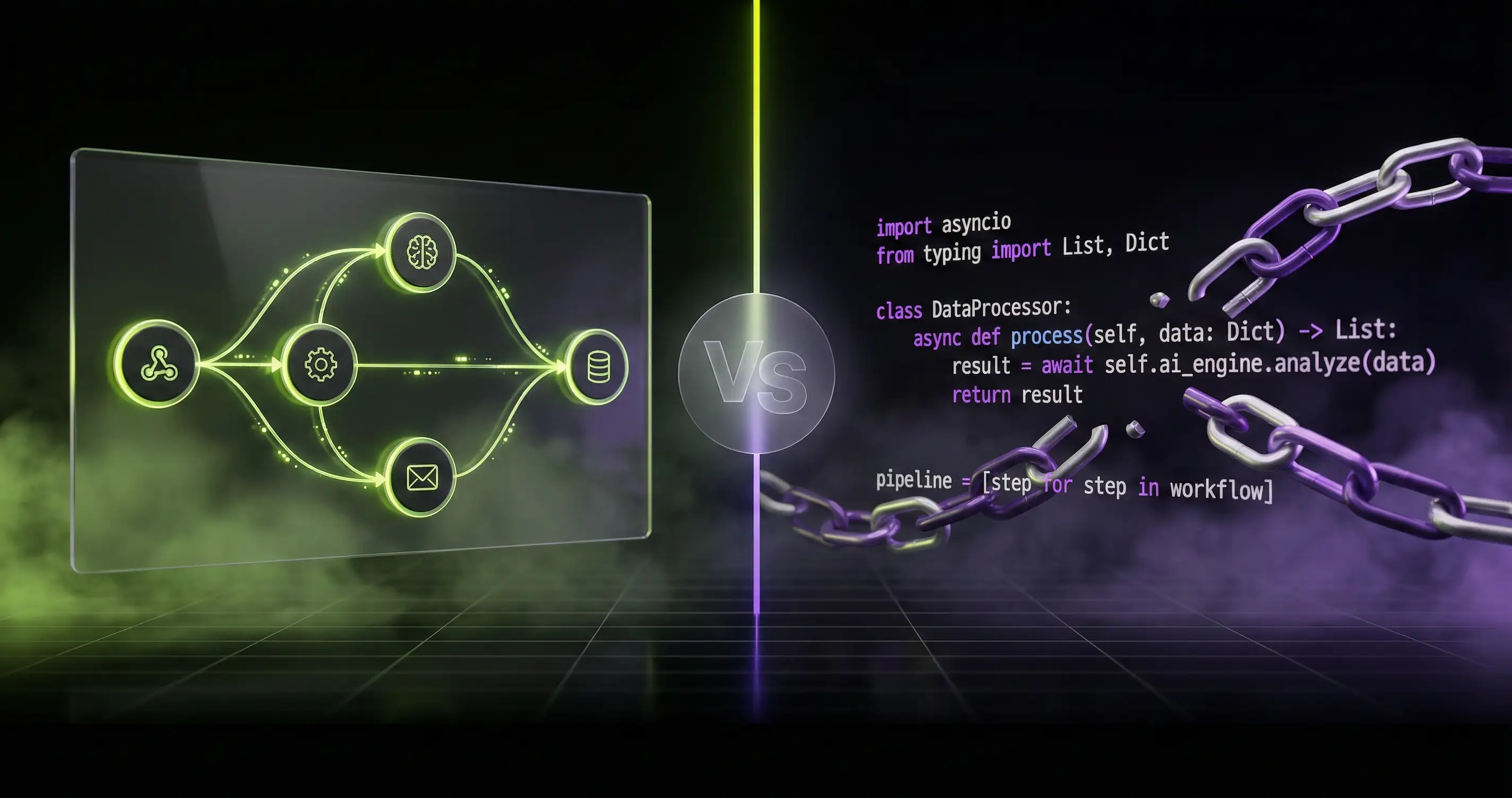

Architecture: The Core Difference

This is where the two tools truly diverge:

n8n: Visual DAG

n8n workflows typically behave like Directed Acyclic Graphs (DAGs): data flows in one direction, from trigger to output. Loops are possible (for example, with the Loop Over Items node), but they are the exception rather than the default way of building. Each node represents a step (API call, data transformation, conditional logic), and the flow is visually traceable. AI nodes in this system are “just” additional nodes - you plug the LLM into the workflow like any other service.
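As a rough mental model of that one-directional flow, the sketch below runs a four-step workflow in dependency order using Python's standard `graphlib` module. The node names mirror the four-step example in this section; the node functions and their payloads are invented for illustration, and the LLM step is a stub.

```python
from graphlib import TopologicalSorter

# Each key lists the nodes it depends on.
WORKFLOW = {
    "fetch_data": {"trigger"},
    "llm_processing": {"fetch_data"},
    "send_response": {"llm_processing"},
}

# Hypothetical node implementations: each takes the running payload
# and returns an updated copy.
NODES = {
    "trigger": lambda data: {"email": "Where is my invoice?"},
    "fetch_data": lambda data: {**data, "customer": "ACME"},
    "llm_processing": lambda data: {**data, "label": "question"},  # stubbed LLM call
    "send_response": lambda data: {**data, "sent": True},
}


def run_workflow(workflow, nodes):
    """Run every node exactly once, in dependency order."""
    data = {}
    order = list(TopologicalSorter(workflow).static_order())
    for name in order:
        data = nodes[name](data)
    return order, data


if __name__ == "__main__":
    order, result = run_workflow(WORKFLOW, NODES)
    print(" -> ".join(order))
    print(result)
```

Each node runs once and hands its output forward. If the dependency map contained a cycle, `TopologicalSorter` would raise `graphlib.CycleError`, which is exactly the property that separates a DAG from an agent loop.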

    [Trigger] → [Fetch Data] → [LLM Processing] → [Send Response]

LangChain/LangGraph: Programmatic Graph

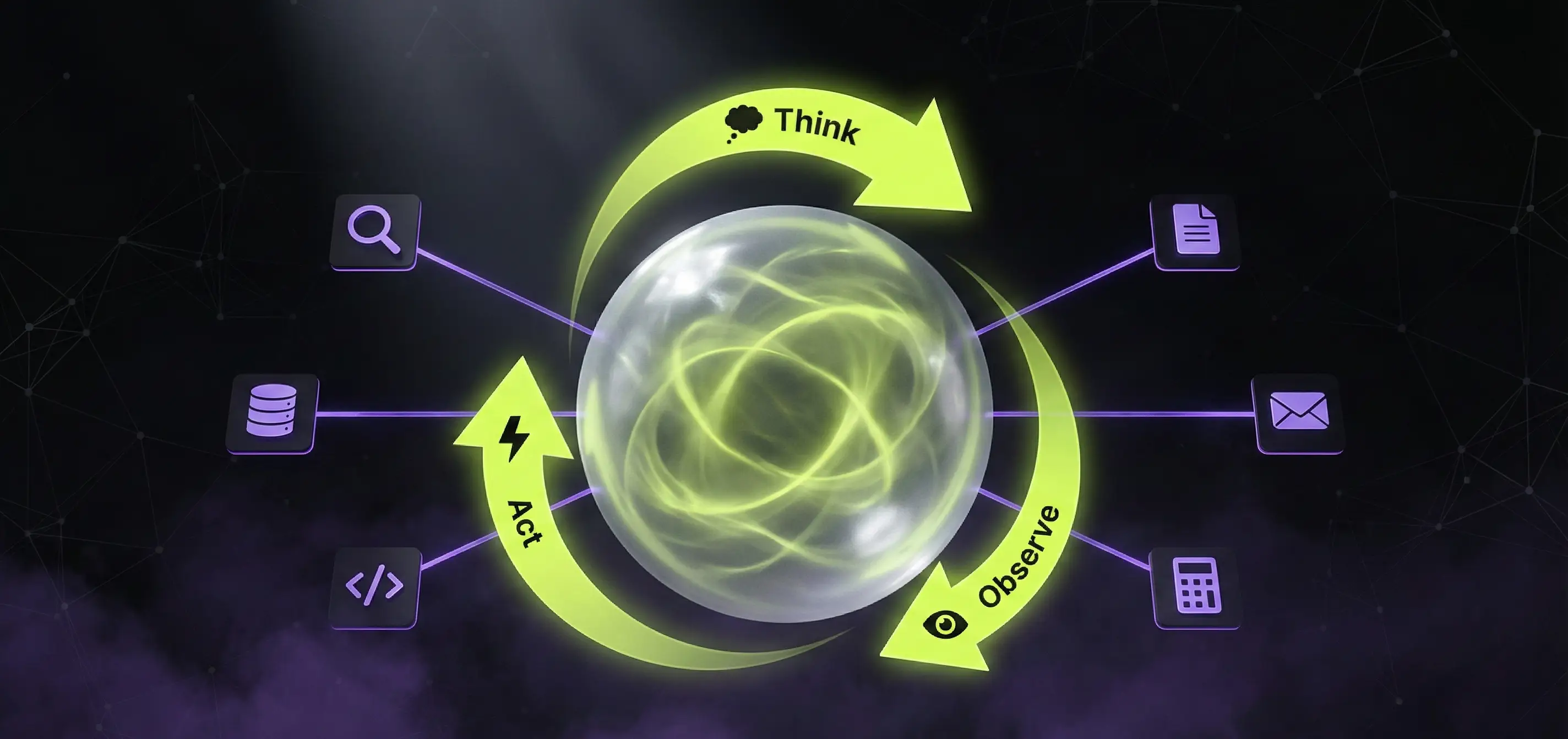

LangChain’s LCEL pipelines are also linear (DAG), but LangGraph handles cyclical graphs - the agent can return to previous steps, create decision points, and maintain state between steps.

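    from langgraph.graph import END, StateGraph

    # AgentState (the state schema) and the four callables used below are
    # placeholders you define yourself; they are not part of LangGraph.
    graph = StateGraph(AgentState)

    graph.add_node("reason", reasoning_step)
    graph.add_node("act", tool_execution)
    graph.add_node("evaluate", evaluation_step)

    graph.set_entry_point("reason")  # execution starts at the reasoning step
    graph.add_edge("reason", "act")
    graph.add_edge("act", "evaluate")

    # should_continue(state) returns True to loop again or False to stop
    graph.add_conditional_edges("evaluate", should_continue, {
        True: "reason",  # Back to reasoning
        False: END,
    })

This difference is critical: if your AI agent needs to iterate (e.g., retry multiple times, evaluate its own output, choose different paths based on decisions), LangGraph is significantly more flexible.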
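To see what such a cycle does at runtime, here is a dependency-free sketch of the reason → act → evaluate loop. It does not use LangGraph: the `END` sentinel, the stub node functions, and the rule that the "tool" succeeds on the third attempt are all assumptions made for this illustration.

```python
from typing import Callable, Dict

END = "__end__"  # local sentinel for this sketch, not imported from LangGraph


def reason(state: dict) -> dict:
    # Stub: plan the next attempt based on how many have been made so far.
    return {**state, "plan": f"attempt {state['attempts'] + 1}"}


def act(state: dict) -> dict:
    # Stub tool call: pretend the tool only succeeds on the third attempt.
    attempts = state["attempts"] + 1
    result = "ok" if attempts >= 3 else "error"
    return {**state, "attempts": attempts, "result": result}


def evaluate(state: dict) -> dict:
    # The agent checks its own output before deciding whether to stop.
    return {**state, "good_enough": state["result"] == "ok"}


NODES: Dict[str, Callable[[dict], dict]] = {
    "reason": reason,
    "act": act,
    "evaluate": evaluate,
}


def next_node(current: str, state: dict) -> str:
    """Two fixed edges plus one conditional edge that can loop back."""
    if current == "reason":
        return "act"
    if current == "act":
        return "evaluate"
    return END if state["good_enough"] else "reason"


def run(max_steps: int = 30) -> dict:
    """Walk the graph until END, with a step cap as a safety net."""
    state, current, steps = {"attempts": 0}, "reason", 0
    while current != END and steps < max_steps:
        state = NODES[current](state)
        current = next_node(current, state)
        steps += 1
    return state


if __name__ == "__main__":
    print(run())
```

The step cap matters in practice: any graph that is allowed to loop needs some bound so that a model which never produces an acceptable answer cannot run forever.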

Practical Use Cases

1. Customer Support Pipeline

n8n approach: Webhook receives email → AI node classifies it (complaint/question/feedback) → conditional branching → automatic reply or human approval → Slack notification + CRM update.

LangChain approach: Python script that analyzes incoming messages with an LLM, queries the CRM via tool use, determines the best response through multi-step reasoning, and sends it via API.

Verdict: If customer support is part of a broader business workflow (CRM, Slack, email, spreadsheet integrations), n8n wins. If response quality is the primary concern and complex reasoning is needed, LangChain.
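The classify-then-branch step at the heart of this pipeline can be sketched in a few lines. The keyword-based `classify` function below is a deliberately crude stand-in for the LLM call, and the rule that only complaints go to a human is an assumption made for the example. The three categories come from the flow described above.

```python
from dataclasses import dataclass

CATEGORIES = ("complaint", "question", "feedback")


def classify(message: str) -> str:
    """Keyword stand-in for the LLM classification step (not a real model call)."""
    text = message.lower()
    if any(word in text for word in ("refund", "broken", "unacceptable")):
        return "complaint"
    if "?" in text:
        return "question"
    return "feedback"


@dataclass
class Routing:
    category: str
    auto_reply: bool    # send an automatic answer
    needs_human: bool   # hold for human approval and notify the team


def route(message: str) -> Routing:
    """Conditional branch: complaints go to a human, everything else is auto-answered."""
    category = classify(message)
    needs_human = category == "complaint"
    return Routing(category, auto_reply=not needs_human, needs_human=needs_human)


if __name__ == "__main__":
    for msg in ("My device arrived broken, I want a refund",
                "When do you open?",
                "Great service, thanks"):
        print(route(msg))
```

In n8n the same logic would be an AI node followed by a Switch or IF node; in a LangChain script the `classify` stub would be replaced by a model call that returns one of the three labels.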

2. Document Processing System

n8n approach: Google Drive trigger → file download → text extraction → LLM summarization → save results to database + Slack notification.

LangChain approach: PDF loader → text chunking → embedding generation → vector database storage → RAG pipeline for retrieval.

Verdict: n8n excels at simple “process and save” tasks. But if you’re building a RAG system (vector search, semantic retrieval, multi-step evaluation), LangChain is your tool.
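For readers new to RAG, the chunk → embed → store → retrieve sequence can be shown with the standard library alone. This is a toy under loud assumptions: the "embedding" is a bag-of-words count rather than a learned model, the "vector database" is a Python list, and all function names are invented for the example.

```python
import math
import re
from collections import Counter


def chunk(text: str, size: int = 12) -> list:
    """Split text into chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts (real systems use a learned embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def build_index(chunks):
    """Stand-in for the vector database: a list of (vector, chunk) pairs."""
    return [(embed(c), c) for c in chunks]


def retrieve(index, query: str, k: int = 1):
    """Return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[0]), reverse=True)
    return [text for _, text in ranked[:k]]


if __name__ == "__main__":
    docs = [
        "Invoices are due within 30 days of issue.",
        "Support is available on weekdays from 9 to 17.",
        "Refunds are processed within five business days.",
    ]
    index = build_index(docs)
    print(retrieve(index, "When are invoices due?"))
```

In a real pipeline the retrieved chunks would then be placed into the prompt sent to the LLM. The structure stays the same when the word counts are swapped for model embeddings and the list for a vector store.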

3. Data Enrichment

n8n approach: Webhook/schedule trigger → fetch data from API → enrich/classify with LLM → write results to spreadsheet or database.

Verdict: This is clearly n8n’s territory. The integration ecosystem and visual editor are unbeatable here - you don’t need to write a single line of code.

Learning Curve

| Aspect | n8n | LangChain |
|---|---|---|
| Target audience | Business analysts, ops, no-code developers | Python/JS developers |
| Entry barrier | Low (visual editor) | High (programming required) |
| Time to learn AI features | ~5-10 hours | ~20-40 hours |
| Documentation | Good, practical tutorials | Extensive, but rapidly changing |
| Debugging | Visual execution logs | Python debugger + LangSmith |

Self-Hosting and Deployment

n8n

- Up and running with Docker in minutes

- Database: SQLite (development) or PostgreSQL (production)

- Real self-hosting cost: $50-300/month (VPS + database)

- Cloud version: $20-50/month (managed hosting)

LangChain

- Python package: pip install langchain

- Deployment is on you: FastAPI wrapper, Docker, serverless (AWS Lambda, GCP Cloud Functions)

- LangServe: simplified deployment as REST API

- LangSmith: hosted monitoring and tracing (SaaS, separately priced)

- No out-of-the-box hosting - infrastructure know-how required

Alternatives Worth Knowing

The market has expanded significantly by 2026. Here are the most important alternatives:

Flowise

Open-source, visual LLM application builder. With its AgentFlow V2 architecture, Flowise has taken a serious step toward production use: node-based workflow orchestration, MCP integration, Document Store management, and human-in-the-loop support. If you want LangChain’s power with a visual interface, Flowise is a solid middle ground.

Dify

Dify is also an open-source LLM application platform, but with more production-ready features (tracing, hosting, prompt management). It is particularly strong if you’re not locked into the OpenAI ecosystem, as it provides excellent support for all LLM providers, including Claude Sonnet 5 and Gemini 3 Pro.

LangGraph

Not so much an alternative as LangChain’s evolution. If you’re building complex, stateful AI agents, LangGraph is what you’re looking for. By 2026 it is a production-grade solution with state machine-based orchestration, human approval checkpoints, and persistent memory.

When to Use Both Together

The best solution is often to not choose. A typical architecture:

- LangChain/LangGraph → Build the AI “brain”: agent logic, RAG pipeline, complex reasoning

- FastAPI wrapper → Expose it as a REST API

- n8n → Call the API from your workflow and integrate with business systems

This way, n8n handles integrations (email, CRM, Slack, webhooks), while LangChain handles the heavy AI work. This isn’t a compromise - it’s the best of both worlds.
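The contract between the two sides is just JSON over HTTP, so it can be sketched independently of any web framework. In the example below, `run_agent` is a placeholder for the LangChain/LangGraph call, and `handle_invoke` plus the `question`/`answer` field names are assumptions chosen for illustration. A FastAPI route would wrap the same logic.

```python
import json
from typing import Tuple


def run_agent(question: str) -> dict:
    """Placeholder for the LangChain/LangGraph call; returns a canned answer."""
    return {"answer": f"(stub) received: {question}", "sources": []}


def _error(message: str) -> Tuple[int, bytes]:
    return 400, json.dumps({"error": message}).encode()


def handle_invoke(raw_body: bytes) -> Tuple[int, bytes]:
    """Turn a JSON request body into (status_code, JSON response body)."""
    try:
        payload = json.loads(raw_body)
        question = payload["question"]
    except (ValueError, KeyError, TypeError):
        # Malformed JSON, a missing field, or a non-object body.
        return _error('expected a JSON object with a "question" field')
    if not isinstance(question, str) or not question.strip():
        return _error('"question" must be a non-empty string')
    return 200, json.dumps(run_agent(question)).encode()


if __name__ == "__main__":
    # What n8n's HTTP Request node would send, and what it would get back.
    status, body = handle_invoke(b'{"question": "Where is my order?"}')
    print(status, json.loads(body))
```

Keeping validation and error responses in the API layer means the n8n workflow only has to branch on the status code, which keeps the AI logic isolated from the business workflow.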

    [n8n Webhook] → [n8n: Data Preparation] → [HTTP Request: LangChain API]
                                                          ↓
    [n8n: Process Results] ← [LangChain: AI reasoning + tool use]
              ↓
    [n8n: Slack + CRM + Email]

Summary: Which Should You Choose?

Choose n8n if:

- You’re automating business processes and want to add AI into the mix

- You need many external integrations (CRM, email, Slack, databases)

- Your team includes non-developers

- You want quick results with a visual interface

Choose LangChain if:

- You’re building a dedicated AI application (chatbot, RAG, agent)

- You need full control over the LLM pipeline

- You’re planning complex reasoning, tool use, or multi-agent systems

- You have Python/JS developer capacity

Choose both if:

- You’re building an enterprise-level system where AI is one component

- You want to isolate AI logic from business workflows

- Scalability and maintainability matter

The bottom line: the tool doesn’t matter - the problem you’re solving does. n8n and LangChain aren’t competitors - they complement each other. The question isn’t “which is better,” but “which solves my specific problem more effectively.”

If you need help planning or implementing AI automation, the AppForge team is fluent in both tools - and can help you find the architecture that best fits your project.
