AI Integration into Existing Systems 2026 – A Practical Guide for Businesses

How do you integrate AI into existing systems without rebuilding everything?

Integrating artificial intelligence into your existing IT infrastructure does not require a complete system rewrite. Modern AI integration works through API-based modules that connect to your current systems - whether that is an ERP, CRM, e-commerce platform, or internal knowledge base. For most businesses, AI implementation can be completed in 4–12 weeks through a middleware layer, without touching your existing codebase.

In 2026, enterprise AI integration is no longer experimental. According to the latest McKinsey research, 72% of businesses have already integrated at least one AI-powered solution into their operations. Those who have not yet started are accumulating a competitive disadvantage - but the good news is that it has never been easier or more cost-effective to begin.

The API-First Approach: Why You Do Not Need to Rebuild

In traditional software development, adding a new capability often means rewriting significant parts of the system. AI integration takes a fundamentally different approach - it connects from the outside, building an intelligent layer on top of your existing data flows.

Benefits of API-first AI integration

- Minimal risk: Your existing system remains unchanged; the AI module runs as an independent service

- Rapid prototyping: A working proof-of-concept can be built in 1–2 weeks

- Independent scalability: The AI module scales independently from your main system

- Full reversibility: If something does not work, the AI layer can be disconnected without consequences

The middleware architecture

    [Existing System] ←→ [API Gateway] ←→ [AI Middleware] ←→ [LLM Provider]
            ↑                  ↑                  ↑
         ERP/CRM          Auth + Rate         Prompt mgmt
         Webshop           Limiting           RAG pipeline
         Database          Logging            Caching

The API Gateway layer handles authentication, rate limiting, and logging. The AI Middleware manages prompt engineering, RAG pipelines, and response caching. This separation ensures that not a single line of your existing system changes.
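As a rough illustration of the AI Middleware layer, the following Python sketch combines a prompt template, a response cache, and a pluggable provider call. The names `AIMiddleware`, `call_llm`, and `fake_provider` are hypothetical and stand in for whatever client your LLM provider ships; nothing here is a real SDK call.

```python
import hashlib
from typing import Callable, Dict

# Hypothetical prompt template; the wording is an assumption for illustration.
PROMPT_TEMPLATE = "Answer using only this context:\n{context}\n\nQuestion: {question}"


class AIMiddleware:
    """Sits between the existing system and the LLM provider."""

    def __init__(self, call_llm: Callable[[str], str]) -> None:
        self.call_llm = call_llm          # injected provider client (assumed)
        self.cache: Dict[str, str] = {}   # in-memory response cache
        self.provider_calls = 0           # counts real provider round trips

    def ask(self, question: str, context: str) -> str:
        prompt = PROMPT_TEMPLATE.format(context=context, question=question)
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self.cache:             # identical prompt: skip the API call
            return self.cache[key]
        self.provider_calls += 1
        answer = self.call_llm(prompt)
        self.cache[key] = answer
        return answer


def fake_provider(prompt: str) -> str:
    """Stand-in for a real provider call, so the sketch runs offline."""
    return f"(model answer for {len(prompt)} prompt characters)"


middleware = AIMiddleware(fake_provider)
first = middleware.ask("What is the return window?", "Returns are accepted within 30 days.")
second = middleware.ask("What is the return window?", "Returns are accepted within 30 days.")
```

Because the provider is injected as a plain function, the existing system only ever talks to `ask`, and the provider behind it can be swapped without touching the caller.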

Types of AI Integration: Which Fits Your Business?

Not all AI integrations are the same. The following five types cover 90% of enterprise use cases:

1. Customer service chatbot overlay

The most common entry point. An AI chatbot sits on top of your existing website or internal system, answering customer questions from your company knowledge base.

Technology: OpenAI Assistants API or Claude API + RAG system populated with your company documents.

Typical implementation time: 3–6 weeks

ROI: Automatically handles 40–60% of customer service inquiries, roughly the workload of 2–3 full-time employees (FTEs).

2. RAG knowledge base integration

Your company documents (policies, manuals, FAQs, product datasheets) are indexed into a vector database and made searchable through an AI layer. Employees and customers can search your entire knowledge base using natural language questions.

Technology: LangChain + Pinecone/Weaviate/Qdrant vector database + embedding models

Typical implementation time: 4–8 weeks

ROI: 70% reduction in time spent searching for information, saving 3–5 hours per person per week.
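The retrieval step of such a knowledge base can be sketched in a few lines of Python. This is a toy version under stated assumptions: `embed` uses plain word counts instead of a real embedding model, and the list `docs` replaces the vector database. Only the ranking idea (cosine similarity between query and document vectors) carries over to a production setup.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[token] * b[token] for token in a if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: List[str], top_k: int = 1) -> List[Tuple[float, str]]:
    """Return the top_k documents most similar to the query."""
    q = embed(query)
    scored = [(cosine(q, embed(doc)), doc) for doc in documents]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:top_k]


# Hypothetical knowledge-base snippets.
docs = [
    "Returns are accepted within 30 days of delivery.",
    "Standard shipping takes 3 to 5 business days.",
    "Gift cards cannot be exchanged for cash.",
]
best = retrieve("How long does shipping take?", docs)
```

The retrieved text would then be placed into the prompt as context, so the model answers from your documents rather than from memory.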

3. Predictive analytics module

A predictive model built on your existing business data (sales figures, customer behavior, inventory levels) that provides forecasts and recommendations.

Technology: Python ML pipeline (scikit-learn, XGBoost) or LLM-based analytics (GPT-5.2 Code Interpreter)

Typical implementation time: 6–12 weeks

ROI: 15–25% cost reduction in inventory optimization, 20–35% accuracy improvement in sales forecasting.

4. Document processing AI

Automatic processing, data extraction, and system entry for invoices, contracts, and orders. Replaces manual data entry.

Technology: GPT-5.2 Vision or Claude Opus Vision API + structured output parsing

Typical implementation time: 4–8 weeks

ROI: 85–95% reduction in manual data entry time, 60% reduction in error rates.
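The "structured output parsing" step deserves care, because model output should be validated before it is written into an accounting system. The sketch below assumes the model has been asked to return JSON with the fields `vendor`, `amount`, `tax`, and `category`; the `Invoice` class, `parse_invoice` function, and the sample response are hypothetical.

```python
import json
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Invoice:
    vendor: str
    amount: Decimal
    tax: Decimal
    category: str


REQUIRED_FIELDS = ("vendor", "amount", "tax", "category")


def parse_invoice(raw_json: str) -> Invoice:
    """Validate model output before it reaches the accounting system."""
    data = json.loads(raw_json)  # raises a ValueError subclass on invalid JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = [name for name in REQUIRED_FIELDS if name not in data]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    amount = Decimal(str(data["amount"]))
    tax = Decimal(str(data["tax"]))
    # Simple plausibility rule (an assumption): tax is never larger than the total.
    if amount < 0 or tax < 0 or tax > amount:
        raise ValueError("implausible amount or tax")
    return Invoice(str(data["vendor"]), amount, tax, str(data["category"]))


# Hypothetical model response; in production this string comes from the vision API.
sample = '{"vendor": "Acme Ltd", "amount": "1270.00", "tax": "270.00", "category": "office"}'
invoice = parse_invoice(sample)
```

Records that fail validation are good candidates for a manual review queue instead of automatic entry.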

5. Process automation with AI agents

Automation of complex, multi-step business processes where the AI agent makes autonomous decisions and coordinates multiple systems. For example: incoming order → inventory check → shipping route optimization → customer notification.

Technology: n8n/Make + AI agents (LangGraph, CrewAI) or custom AI workflow development

Typical implementation time: 8–16 weeks

ROI: Full process automation, 60–80% time savings compared to manual processes.
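The order example above can be sketched as a chain of steps that each read and extend a shared order record. This is a deterministic skeleton, not an agent framework: where LangGraph or CrewAI would let a model decide, the sketch uses fixed placeholder rules. The `INVENTORY` data and all function names are hypothetical.

```python
from typing import Any, Callable, Dict, List

Order = Dict[str, Any]
Step = Callable[[Order], Order]

# Hypothetical stock levels; a real step would query the ERP or inventory API.
INVENTORY = {"SKU-1": 5, "SKU-2": 0}


def check_inventory(order: Order) -> Order:
    in_stock = INVENTORY.get(order["sku"], 0) >= order["quantity"]
    return {**order, "in_stock": in_stock}


def choose_shipping(order: Order) -> Order:
    # Placeholder rule; an agent or routing service would decide this in practice.
    if not order["in_stock"]:
        return {**order, "shipping": None}
    method = "express" if order.get("priority") else "standard"
    return {**order, "shipping": method}


def notify_customer(order: Order) -> Order:
    if order["in_stock"]:
        message = f"Your order ships via {order['shipping']}."
    else:
        message = "Your item is out of stock; we will contact you."
    return {**order, "notification": message}


def run_workflow(order: Order, steps: List[Step]) -> Order:
    """Run every step in sequence, passing the enriched order along."""
    for step in steps:
        order = step(order)
    return order


PIPELINE = [check_inventory, choose_shipping, notify_customer]
done = run_workflow({"sku": "SKU-1", "quantity": 2, "priority": True}, PIPELINE)
backorder = run_workflow({"sku": "SKU-2", "quantity": 1}, PIPELINE)
```

Keeping each step as a small function with explicit inputs and outputs makes it easier to replace a single rule with a model-driven decision later and to log what happened at each stage.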

Technology Stack for AI Integration

LLM API comparison

| Provider | Model | Input price (1M tokens) | Output price (1M tokens) | Context | Best for |
|---|---|---|---|---|---|
| OpenAI | GPT-5.2 | $2.50 | $10.00 | 256K | General purpose, code generation |
| Anthropic | Claude Sonnet 5 | $3.00 | $15.00 | 200K | Long documents, nuanced reasoning |
| Anthropic | Claude Haiku 4.5 | $0.80 | $4.00 | 200K | Cost-effective, high volume |
| Google | Gemini 3 Pro | $1.25 | $5.00 | 2M | Multimodal, massive context |
| Mistral | Mistral Large 3 | $2.00 | $6.00 | 128K | European data residency, GDPR |

Orchestration frameworks

LangChain - The most mature AI application development framework. Excellent for RAG pipelines, tool use, and agents. For a detailed comparison with no-code alternatives, see our n8n vs LangChain article.

LangGraph - The LangChain team’s graph-based agent framework. Ideal for complex, multi-step workflows where agents need to manage decision trees.

n8n - A no-code/low-code automation platform with AI support. Perfect for smaller businesses and simpler integrations.

Custom API Gateway - When standard frameworks are insufficient, custom API gateway development provides full control over data flow, security, and performance.

Vector databases for RAG systems

| Database | Type | Pricing | Advantage |
|---|---|---|---|
| Pinecone | Managed | $0.08/1M vectors/mo | Zero-ops, simplest setup |
| Weaviate | Open-source / Managed | Free / Paid | Hybrid search, strong multilingual |
| Qdrant | Open-source / Managed | Free / Paid | Fast, memory-efficient |
| Chroma | Open-source | Free | Developer-friendly, simple |
| pgvector | PostgreSQL extension | Free | If you already run PostgreSQL |

AI Integration Architecture Patterns

1. Middleware Pattern

The most common and safest pattern. A dedicated middleware service mediates between your existing system and the AI provider.

    [CRM] → [REST API] → [AI Middleware Service] → [OpenAI/Claude API]
                                   ↓
                           [Prompt Template]
                           [Response Parser]
                           [Cache Layer]
                           [Fallback Logic]

Pros: Full control, easy monitoring, provider-independent

Cons: Additional development cost, extra infrastructure

2. Sidecar Pattern

The AI module runs in the same environment as the main application but remains logically separate. Common in Kubernetes environments.

    [Pod]
    ├── [Main Application Container]
    └── [AI Sidecar Container]
        ├── Prompt management
        ├── LLM API client
        └── Response cache

Pros: Low latency, shared resources

Cons: Tighter coupling, harder to scale independently

3. API Gateway + Serverless Functions

The most modern and cost-effective approach for small-to-medium projects. AI logic runs in serverless functions, with the API Gateway routing traffic.

    [Cloudflare Workers / AWS Lambda]
    ├── /api/chat → AI chatbot logic
    ├── /api/classify → Document classification
    ├── /api/summarize → Summary generation
    └── /api/search → RAG search

Pros: Pay-per-use pricing, automatic scaling, zero maintenance

Cons: Cold start latency, vendor lock-in risk

Data Security and GDPR in AI Integration

Data security is one of the most critical aspects of AI integration, especially within the EU where GDPR enforces strict rules on personal data processing.

Key security considerations

Data minimization: Only send the data that is strictly necessary to the LLM. Do not send entire customer records when only the question and relevant context are needed.

PII masking: Automatically mask personally identifiable information (names, emails, phone numbers, national IDs) from prompts before sending them to the LLM API.

Data Processing Agreements (DPA): OpenAI, Anthropic, and Google all offer enterprise-level DPAs that guarantee your data is not used for model training.

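Logging and auditing: Log every AI interaction - who asked, what they asked, what data the AI received, and what it answered. An audit trail like this supports GDPR's accountability principle (Article 5(2)), but the logs can themselves contain personal data, so apply the same minimization, masking, and retention rules to them.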
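PII masking can start with simple pattern matching before a prompt leaves your infrastructure. The sketch below covers only emails and phone-like numbers with two illustrative regular expressions; the patterns and the `mask_pii` function are assumptions, and names or national IDs typically need a dedicated detection step that regexes alone do not provide.

```python
import re

# Illustrative patterns only; tune them to the formats in your own data.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def mask_pii(text: str) -> str:
    """Replace emails and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


prompt = "Customer anna.kovacs@example.com (phone +36 30 123 4567) asks about order 48213."
masked = mask_pii(prompt)
# masked == "Customer [EMAIL] (phone [PHONE]) asks about order 48213."
```

The short order number survives because the phone pattern requires at least nine characters; longer numeric identifiers would need their own rule.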

On-premise vs Cloud AI

| Factor | Cloud AI (API) | On-premise AI |
|---|---|---|
| Initial cost | Low (pay-as-you-go) | High (GPU server: $15K–$80K) |
| Performance | Excellent (latest models) | Limited (smaller models) |
| Data security | Secured via DPA | Full control |
| Maintenance | Zero (provider handles it) | Own DevOps team needed |
| Scalability | Automatic | Manual (more GPUs) |
| Best for | SMBs, fast start | Banking, healthcare, defense |

For most businesses, cloud API solutions are ideal. On-premise AI is only necessary when regulatory requirements (banking, healthcare) or extreme data security needs justify the investment.

Cost Estimation: How Much Does AI Integration Cost?

For a comprehensive breakdown of AI development costs, see our dedicated article. Here we focus specifically on integration costs.

Development costs

| Project type | Complexity | Average cost | Timeline |
|---|---|---|---|
| Chatbot overlay | Simple | $2,000 – $5,500 | 3–6 weeks |
| RAG knowledge base | Medium | $4,000 – $11,000 | 4–8 weeks |
| Document processing | Medium | $4,000 – $9,500 | 4–8 weeks |
| Predictive analytics | Complex | $8,000 – $22,000 | 6–12 weeks |
| Full AI workflow | High | $14,000 – $40,000 | 8–16 weeks |

Operational costs (monthly)

API costs depend on usage intensity:

| Usage level | API cost/mo | Infrastructure/mo | Total/mo |
|---|---|---|---|
| Low (500 queries/day) | $40 – $110 | $15 – $30 | $55 – $140 |
| Medium (2,000 queries/day) | $140 – $400 | $40 – $80 | $180 – $480 |
| High (10,000+ queries/day) | $550 – $1,600 | $130 – $270 | $680 – $1,870 |
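To sanity-check these ranges against your own traffic, API cost can be estimated from query volume and token counts. The sketch below uses the Claude Haiku 4.5 prices from the LLM API comparison table; the token counts per query (1,500 input, 300 output) and the 30-day month are assumptions you should replace with measured values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens


def monthly_api_cost(
    price: ModelPrice,
    queries_per_day: int,
    input_tokens: int,
    output_tokens: int,
    days: int = 30,
) -> float:
    """Estimate monthly API spend in USD from per-query token counts."""
    queries = queries_per_day * days
    input_cost = queries * input_tokens / 1_000_000 * price.input_per_million
    output_cost = queries * output_tokens / 1_000_000 * price.output_per_million
    return round(input_cost + output_cost, 2)


haiku = ModelPrice(input_per_million=0.80, output_per_million=4.00)
cost = monthly_api_cost(haiku, queries_per_day=2_000, input_tokens=1_500, output_tokens=300)
```

With these assumed token counts the estimate comes to about $144 per month, at the lower edge of the medium tier above. Larger prompts or a more expensive model move the figure up quickly, which is why measuring real token usage early is worthwhile.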

Cost optimization tips

- Response caching: Cache responses for recurring queries - up to 70% API cost reduction

- Model tiering: Use Haiku (cheap) for simple queries, Sonnet/Opus (more expensive but more accurate) for complex ones

- Batch processing: If real-time responses are not needed, the batch API saves 50%

- Token optimization: Use shorter prompts and structured output (JSON mode)
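Model tiering can be as simple as a routing function placed in front of the provider call. The sketch below is a deliberately naive heuristic: the word limit, the keyword list, and the tier labels `haiku` and `sonnet` are assumptions for illustration, not real API model identifiers.

```python
CHEAP_MODEL = "haiku"    # placeholder tier labels, not real API model IDs
STRONG_MODEL = "sonnet"

# Hypothetical signals that a query needs the stronger model.
COMPLEX_HINTS = ("compare", "analyze", "explain why", "contract", "legal")


def pick_model(query: str, max_simple_words: int = 25) -> str:
    """Route long or analysis-heavy queries to the stronger tier."""
    text = query.lower()
    if len(text.split()) > max_simple_words:
        return STRONG_MODEL
    if any(hint in text for hint in COMPLEX_HINTS):
        return STRONG_MODEL
    return CHEAP_MODEL


simple = pick_model("Where is my order?")
complex_ = pick_model("Compare the warranty terms in these two contracts.")
```

In practice the routing rule is worth evaluating against logged queries, since a rule that sends too much traffic to the cheap tier can cost more in answer quality than it saves in API fees.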

Measuring ROI: How to Prove AI Integration Pays Off

AI integration ROI should be measured across three dimensions:

1. Direct cost savings

- Working hours reduction: How many hours per month of manual work does the AI replace?

- FTE savings: How many employees’ time is freed up for higher-value tasks?

- Error rate reduction: How many fewer manual data entry errors occur?

2. Revenue increase

- Faster response time: Customer service responds faster → higher satisfaction → more returning customers

- Predictive sales: AI generates better recommendations → higher conversion rates

- New capabilities: AI-powered features (chatbot, intelligent search) create competitive advantages

3. Strategic value

- Data-driven decisions: Better business decisions based on AI analysis

- Employee satisfaction: Automating monotonous tasks improves morale

- Scalability: AI enables growth without proportional headcount increases

Average payback period: 4–8 months for most SMB AI integration projects.

3 Real-World Use Cases

Use Case 1: Customer service AI assistant

Situation: A mid-size e-commerce company (50,000 orders/month) runs a customer service team of 8 operators handling 400 inquiries daily. 65% of inquiries are routine questions (shipping status, returns, size charts).

Solution: RAG-based chatbot integration with the existing Shopify store and Freshdesk ticketing system.

Architecture:

    [Shopify Store] ← Webhook → [n8n Workflow]
                                      ↓
    [Freshdesk API] ← Ticket → [AI Middleware (Node.js)]
                                      ↓
                            [Claude Sonnet 5 API]
                                      ↓
                             [Qdrant Vector DB]
                        (product catalog, T&Cs, FAQ)

Results:

- AI automatically handles 58% of routine inquiries

- Average response time dropped from 4 hours to 30 seconds

- 3 operators redirected to higher-value tasks

- Monthly savings: ~$3,200 (partial replacement of 3 FTEs)

- Investment: $7,500 development + $220/mo operations

- Payback: 2.5 months
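The payback figure follows from dividing the one-off investment by the net monthly benefit (savings minus operating cost). A minimal sketch using the numbers from this use case; the function name `payback_months` is an assumption:

```python
def payback_months(investment: float, monthly_savings: float, monthly_operating_cost: float) -> float:
    """Months until cumulative net savings cover the initial investment."""
    net_monthly_benefit = monthly_savings - monthly_operating_cost
    if net_monthly_benefit <= 0:
        raise ValueError("the project never pays back at these figures")
    return investment / net_monthly_benefit


# Use Case 1: $7,500 development, ~$3,200 monthly savings, $220 monthly operations.
months = payback_months(investment=7_500, monthly_savings=3_200, monthly_operating_cost=220)
```

Here the net benefit is $2,980 per month, so the investment is recovered after roughly 2.5 months.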

Use Case 2: Automated document processing

Situation: An accounting firm processes 2,000 incoming invoices per month manually. Each invoice takes an average of 8 minutes (data extraction, system entry, categorization).

Solution: AI-powered invoice OCR and data extraction with automatic entry into accounting software.

Architecture:

    [Email / Scanner] → [Cloudflare Workers] → [GPT-5.2 Vision API]
                                                      ↓
                                           [Structured JSON output]
                                        (amount, tax, vendor, category)
                                                      ↓
                                          [Accounting Software API]
                                              (automatic entry)

Results:

- Processing time dropped from 8 minutes to 15 seconds (97% time savings)

- Error rate dropped from 4% to 0.8%

- Monthly savings: ~260 hours of manual work (2,000 invoices × roughly 7.75 minutes saved each)

- Investment: $4,800 development + $120/mo API cost

- Payback: 1.5 months

Use Case 3: AI sales assistant

Situation: A B2B service company’s sales team (12 people) prepares 200 proposals per week. Each proposal requires 30–60 minutes of research and writing.

Solution: AI assistant that generates personalized proposals based on CRM data, previous proposals, and the prospect company’s public data.

Architecture:

    [HubSpot CRM] ← API → [AI Middleware]
                                ↓
    [Previous Proposals DB] → [RAG Pipeline] → [Claude Opus API]
                                ↓                     ↓
    [Prospect Company Data] → [Web Scraping]   [Personalized Proposal]
    (company info, industry)                   (PDF generation)

Results:

- Proposal preparation time dropped from 45 minutes to 10 minutes

- Conversion rate increased from 12% to 19% (AI writes more personalized proposals)

- Monthly savings: ~470 hours (200 proposals per week × 35 minutes saved, over four weeks) + ~15% revenue increase

- Investment: $12,000 development + $320/mo operations

- Payback: 3 months

Step-by-Step: How to Launch Your AI Integration

Step 1: Audit and opportunity assessment (1–2 weeks)

Before building anything, map your current processes:

- Which processes are repetitive and rule-based?

- Where is the highest manual workload?

- What data is available?

- What systems do you have, and do they have APIs?

Step 2: Proof of Concept (2–4 weeks)

Select the single most promising use case and build a working prototype. Do not try to solve everything at once. The PoC needs to prove that the AI solution works with your data and your systems.

Step 3: Pilot operation (4–6 weeks)

From the PoC, build a production-ready solution with a narrow user group. Measure performance, collect user feedback, refine prompts.

Step 4: Production rollout and scaling (ongoing)

If the pilot succeeds, roll it out across the organization. Set up monitoring, alerts, and schedule quarterly reviews.

Common Mistakes in AI Integration

Based on our experience as an AI development team, these are the most frequent pitfalls:

1. The “AI everything” syndrome

Not every task needs AI. If a simple if-else logic solves the problem, do not force an LLM into it. AI delivers real value in tasks requiring complex language processing or pattern recognition.

2. Neglecting data quality

AI output quality is directly proportional to input data quality. If your CRM data is chaotic, AI will not produce miracles. Clean your data first.

3. No fallback strategy

What happens when the OpenAI API is down? When response time exceeds 30 seconds? Always have a Plan B: fallback provider, graceful degradation, manual override capability.
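A fallback chain can be sketched as a loop over providers that ends in a fixed reply when everything fails. The provider functions below are stand-ins that simulate an outage; real clients usually expose their own timeout settings, which are not shown here. All names are hypothetical.

```python
from typing import Callable, List, Sequence, Tuple

Provider = Callable[[str], str]


def ask_with_fallback(
    prompt: str,
    providers: Sequence[Tuple[str, Provider]],
    default_reply: str,
) -> Tuple[str, str, List[str]]:
    """Try each provider in order; degrade to a fixed reply if all fail."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt), errors
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{name}: {exc}")
    return "manual", default_reply, errors


def primary(prompt: str) -> str:
    raise TimeoutError("no response within 30 seconds")  # simulated outage


def secondary(prompt: str) -> str:
    return "Answer from the backup provider"


source, reply, errors = ask_with_fallback(
    "Where is my order?",
    [("primary", primary), ("secondary", secondary)],
    default_reply="A colleague will reply shortly.",
)
```

Returning the collected errors alongside the answer lets the caller log which provider failed, so outages show up in monitoring instead of being silently absorbed.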

4. Underestimating prompt engineering

Prompts are not “write once and done.” They require continuous iteration, A/B testing, and refinement. Prompt quality has at least as much impact on results as model selection.

5. Lack of monitoring

If you do not measure it, you cannot improve it. Track: response quality (user feedback), latency, API costs, hallucination rate, and user satisfaction.
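A first version of such tracking does not need a monitoring product. The sketch below records latency, cost, and optional user feedback per call and summarizes them; the `AIMetrics` class and its field names are hypothetical, and hallucination rate is left out because it requires a separate evaluation step.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Dict, List, Optional


@dataclass
class AIMetrics:
    latencies_s: List[float] = field(default_factory=list)
    costs_usd: List[float] = field(default_factory=list)
    helpful_votes: int = 0
    total_votes: int = 0

    def record(self, latency_s: float, cost_usd: float, helpful: Optional[bool] = None) -> None:
        """Store one AI call; feedback is optional because most users do not vote."""
        self.latencies_s.append(latency_s)
        self.costs_usd.append(cost_usd)
        if helpful is not None:
            self.total_votes += 1
            self.helpful_votes += int(helpful)

    def summary(self) -> Dict[str, Optional[float]]:
        has_calls = bool(self.latencies_s)
        return {
            "calls": len(self.latencies_s),
            "avg_latency_s": mean(self.latencies_s) if has_calls else None,
            "max_latency_s": max(self.latencies_s) if has_calls else None,
            "total_cost_usd": round(sum(self.costs_usd), 4),
            "helpful_rate": self.helpful_votes / self.total_votes if self.total_votes else None,
        }


metrics = AIMetrics()
metrics.record(latency_s=1.2, cost_usd=0.004, helpful=True)
metrics.record(latency_s=2.8, cost_usd=0.006, helpful=False)
metrics.record(latency_s=2.0, cost_usd=0.005)
report = metrics.summary()
```

Once these numbers exist, they can be exported to whatever dashboard or alerting system you already run.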

The Future of AI Integration: What to Expect in 2026 and Beyond

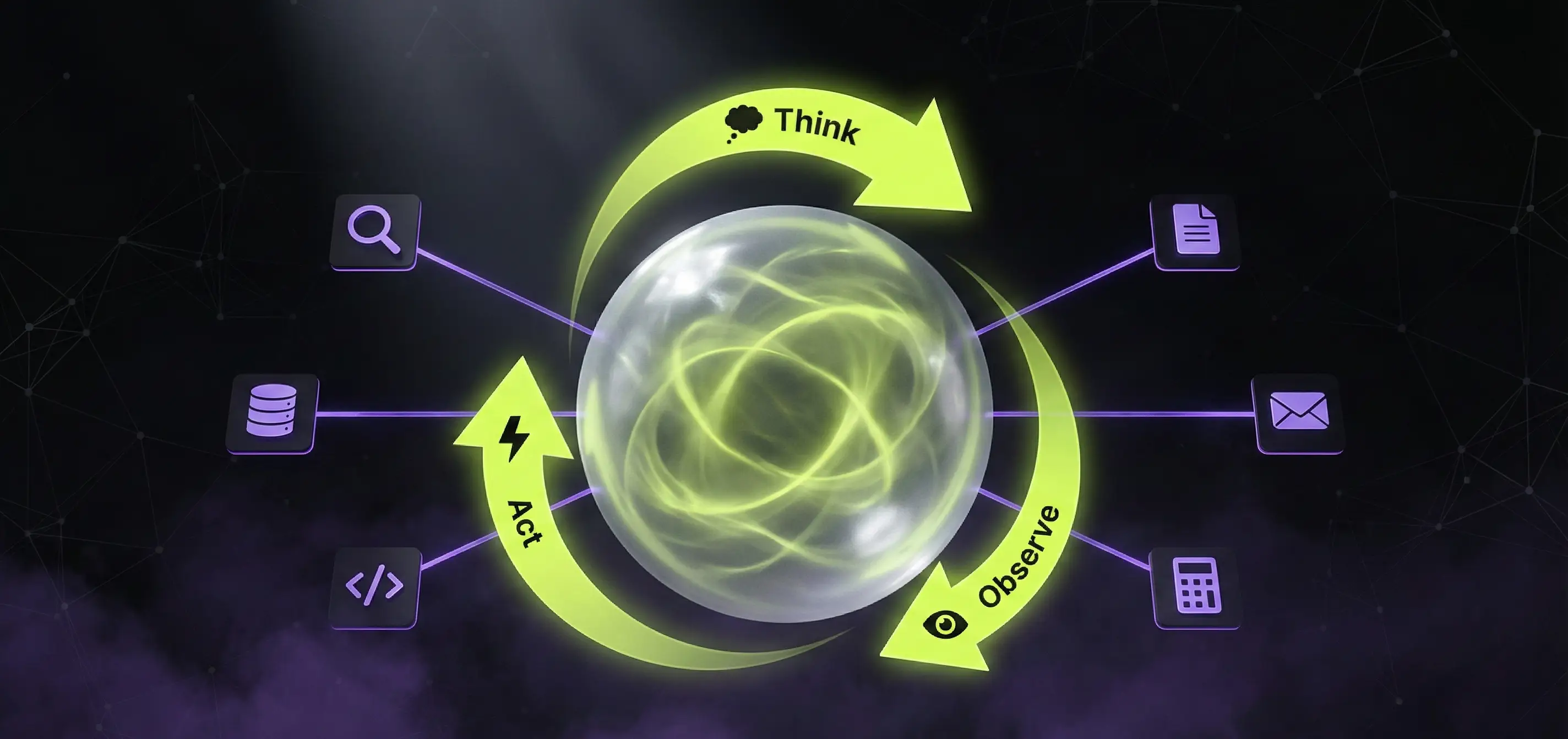

Rise of AI agents

The shift from simple prompt → response models to autonomous AI agents that solve tasks through multi-step, self-directed decision-making is accelerating. We cover the ReAct and tool-use paradigm in detail in a separate article.

Multimodal integration

Beyond text, the processing of images, audio, and video is entering the mainstream. This will bring dramatic changes to document processing, quality control, and customer service.

Edge AI

AI models are becoming smaller and more efficient - in 2026, it is realistic for certain AI tasks to run locally in your web application or on mobile devices, without a server.

Summary and Next Steps

AI integration into existing systems in 2026 is not a luxury - it is a business necessity. The key is a gradual, API-based approach: start with a well-defined use case, measure the results, and expand gradually.

Key takeaways:

- You do not need to rebuild - API-based integration keeps your existing system unchanged

- Start small - a PoC can be built in 2–4 weeks and proves the value

- Measure ROI - most AI integrations pay for themselves within 2–6 months

- Think security first - GDPR compliance and data protection from day one

- Choose the right partner - AI integration requires both technical and business expertise

If you want to assess how AI can fit into your existing systems, get in touch for a free consultation. The AppForge AI development team will help you find the best entry point and build the optimal solution for your needs.
