Local AI Deployment 2026 – Qwen 3.6, NVIDIA DGX Spark and Sovereign AI Infrastructure

Why local AI exploded in 2026

In two years the market flipped. In 2024, almost every enterprise AI project ran on OpenAI or Anthropic APIs. By April 2026, Premai’s research shows 68% of companies running AI in production have moved to a hybrid setup - combining cloud APIs with at least one self-hosted, open-weight model.

Three things changed dramatically:

- Open-source models caught up to the frontier. Qwen3.5-9B beats OpenAI’s GPT-OSS-120B on MMLU-Pro (82.5 vs 80.8) despite being roughly an order of magnitude smaller.

- Most EU AI Act obligations apply from August 2, 2026. Fines reach up to €35 million or 7% of global annual turnover for prohibited AI practices, and up to €15 million or 3% for breaches of the high-risk obligations. For many companies, local deployment is the simplest compliance strategy.

- NVIDIA shipped the DGX Spark. For the first time, a $4,699 desktop “AI supercomputer” can fine-tune a 70B-parameter model.

This article is a practical guide: real benchmark numbers, actual prices, and the cases where local deployment pays off.

What does “local AI” actually mean?

Local AI means the model runs on your infrastructure - no data goes to OpenAI or Anthropic. There are three main topologies:

| Topology | Where it runs | Typical fit |
|---|---|---|
| On-premise | Your server room / office | Healthcare, legal, banking |
| Private cloud | Dedicated cloud instances (AWS, Azure, GCP) | EU data-sovereignty-sensitive companies |
| Edge / desktop | Developer machine / DGX Spark / Mac Studio | Prototypes, small teams, R&D |

What the three have in common: in every case you own the data, and you control which model runs, with which prompts, and what gets logged.

Qwen 3.6 - fresh release (April 2026)

Alibaba shipped Qwen3.6-Max-Preview on April 20, 2026, followed by the open-weight Qwen3.6-27B on April 22. This is the family’s third major release this year alone, a pace that shows how fast Chinese open-source AI development is moving.

What does Qwen 3.6 bring vs Qwen 3.5?

- 260,000 token context window (vs Qwen 3.5’s 128k) - fit an entire codebase in one prompt

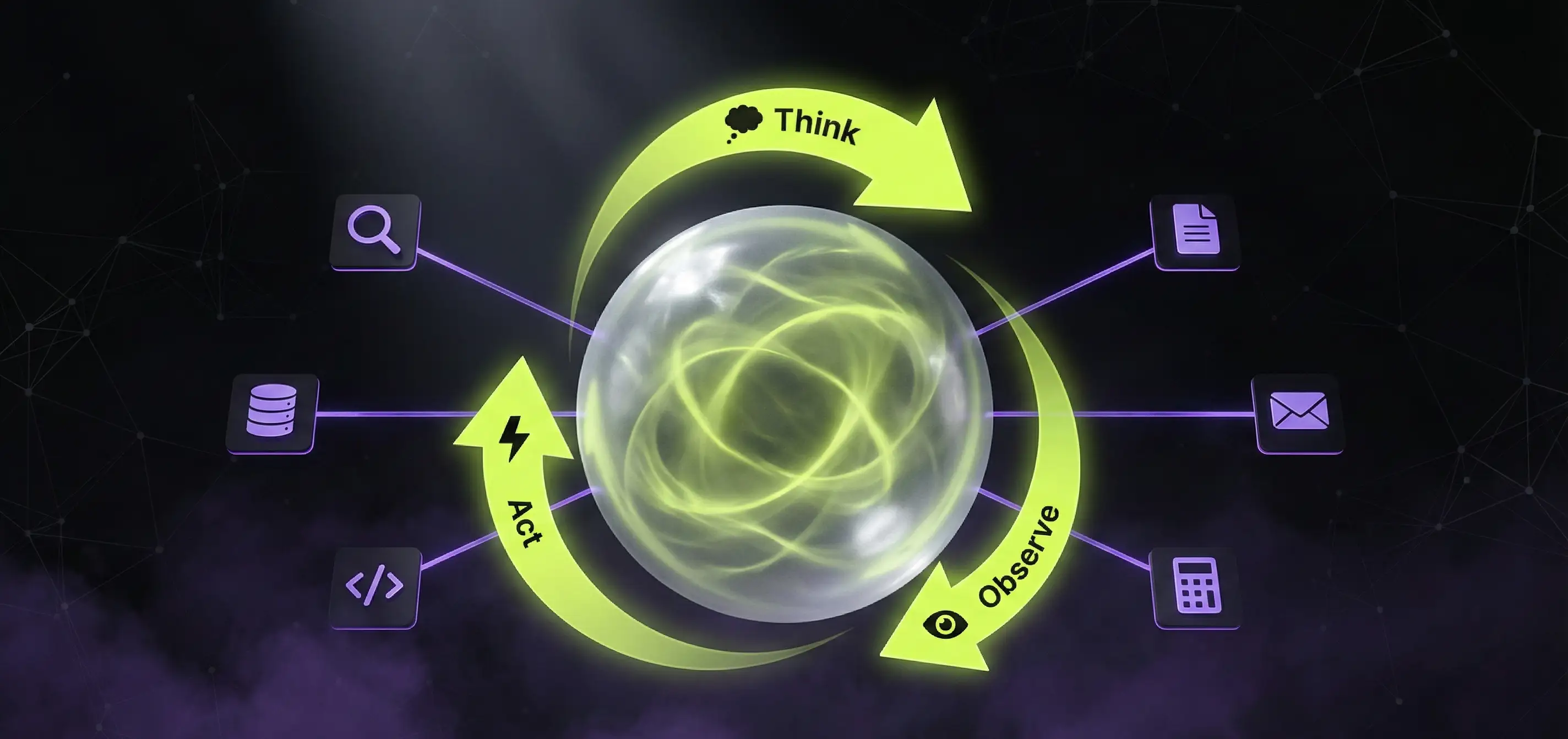

preserve_thinkingfeature - in agentic workflows, reasoning tokens persist across turns, so tool-call chains hold context- Agentic coding gains: SkillsBench +9.9 points, SciCode +10.8, Terminal-Bench 2.0 +3.8 vs 3.5

- 6 #1 rankings on leading code benchmarks (SWE-bench Pro, SciCode, SkillsBench, others)

The Qwen3.6-27B open-weight version can run on-prem today. The Qwen3.6-Max-Preview is currently API-only via Alibaba Cloud Model Studio (qwen3.6-max-preview model ID, OpenAI-compatible endpoint).

Sources: Qwen 3.6 Max Preview official blog, QwenLM/Qwen3.6 GitHub
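Whether your own codebase actually fits in the 260,000-token window can be estimated before you try. A minimal sketch, assuming roughly 4 characters per token (a common rule of thumb; Qwen’s real tokenizer will give different counts) and a hypothetical file-extension filter and answer reserve that you would adjust:

```python
from pathlib import Path

CHARS_PER_TOKEN = 4        # rough rule of thumb (assumption), not Qwen's tokenizer
CONTEXT_TOKENS = 260_000   # context window quoted for Qwen 3.6
SOURCE_SUFFIXES = (".py", ".ts", ".go", ".java", ".md")  # hypothetical filter

def load_repo(root: str) -> dict[str, str]:
    """Read every matching source file under `root` into {path: text}."""
    return {
        str(p): p.read_text(encoding="utf-8", errors="ignore")
        for p in Path(root).rglob("*")
        if p.is_file() and p.suffix in SOURCE_SUFFIXES
    }

def estimate_tokens(files: dict[str, str]) -> int:
    """Approximate token count from the total character count."""
    return sum(len(text) for text in files.values()) // CHARS_PER_TOKEN

def fits_in_context(files: dict[str, str], reserve: int = 20_000) -> bool:
    """True if the files plus `reserve` tokens (instructions + answer) fit."""
    return estimate_tokens(files) + reserve <= CONTEXT_TOKENS
```

Usage: `fits_in_context(load_repo("path/to/repo"))`. For a precise count, run the model’s own tokenizer over the same files instead of the character heuristic.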

Qwen 3.5 and 3.6 - the open-source revolution

Alibaba’s Qwen family has become the de facto standard for open-weight LLMs in European enterprise by 2026, primarily because of the Apache 2.0 license (commercial use is permitted with only light attribution and notice requirements, unlike Llama’s custom license).

Qwen 3.5 / 3.6 benchmark numbers (2026 Q1-Q2)

| Model | MMLU-Pro | HumanEval (code) | RAM | Speed (RTX 4090) |
|---|---|---|---|---|
| Qwen3.5-4B | 64.1 | 71.3 | 8 GB | ~120 tok/s |
| Qwen3.5-9B | 82.5 | 78.4 | 16 GB | ~85 tok/s |
| Qwen3.5-27B | 71.2 | 85.1 | 24 GB | 55 tok/s |
| Qwen3-30B-A3B (MoE) | 79.8 | 82.0 | 20 GB | ~70 tok/s |
| Qwen3-32B-Coder | 73.9 | 88.0 | 32 GB | ~45 tok/s |
| Qwen3.6-27B (new) | 73.5 | 86.4 | 24 GB | ~50 tok/s |

Sources: Qwen Team Technical Report, Local AI Master 2026 benchmarks

What this means in practice

- Qwen3.5-9B: the sweet spot for most SMBs. A 24 GB RTX 4090 or an M3 Pro Mac can run it - and it outperforms GPT-OSS-120B on general knowledge benchmarks.

- Qwen3-32B-Coder: for code generation, it scores 88% on HumanEval, ahead of DeepSeek V3.2 Speciale (82.6%), which needs 8× H100s.

- Qwen3-30B-A3B (Mixture of Experts): only 3B parameters are active per token, so latency is low while the model keeps the knowledge capacity of 30B total parameters. It scores 73-87% on the AIME 2024 math benchmark.
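Both the RAM column and the MoE advantage follow from simple arithmetic: memory you must have scales with total parameters × bytes per weight, while the weights read for each generated token scale with active parameters. A minimal sketch, assuming weights dominate (KV cache, activations and runtime overhead are ignored) and nominal bit widths (formats such as llama.cpp’s Q4_K_M store extra scale data, so they use somewhat more than 4 bits per weight):

```python
def weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB: parameters x bits / 8 bits per byte."""
    return params_billion * bits_per_weight / 8

# Dense 9B model at different precisions (weights only, no KV cache)
for bits in (16, 8, 4):
    print(f"9B @ {bits}-bit: {weight_gb(9, bits):.1f} GB")

# MoE: all 30B parameters must be resident, but only ~3B are read per token
resident = weight_gb(30, 4)    # memory you must provision
per_token = weight_gb(3, 4)    # weights streamed for each generated token
print(f"30B-A3B @ 4-bit: {resident:.1f} GB resident, {per_token:.1f} GB read per token")
```

Add KV cache and runtime overhead on top of these figures when sizing a GPU.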

Use cases our clients ask for most often

- Internal document assistant (RAG over policy + HR + technical docs)

- Customer service chatbot where data exfiltration is not an option (e.g., healthcare)

- Code autocomplete when source code is internal and can’t go to Cursor / Copilot

- Invoice / document extraction with GDPR-sensitive data

NVIDIA DGX Spark - the desktop AI supercomputer

NVIDIA shipped the DGX Spark in late 2025, and in February 2026 raised the price from $3,999 to $4,699 (citing memory supply constraints - official NVIDIA notice).

The specs

- GB10 Grace Blackwell Superchip: 5th-gen Tensor Cores, FP4 support

- CPU: 20-core Arm (10× Cortex-X925 + 10× Cortex-A725)

- Unified memory: 128 GB LPDDR5x @ 8,533 MT/s

- Memory bandwidth: 273 GB/s

- AI compute: 1 petaFLOP at FP4

- Max model size: 70B for fine-tuning, 200B for inference
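Two of these specs are directly linked. Assuming a 256-bit memory interface (our assumption here, not a figure from the list above), the quoted transfer rate reproduces the bandwidth number:

$$
\text{bandwidth} = \frac{256\ \text{bit}}{8\ \text{bit/byte}} \times 8{,}533 \times 10^{6}\ \text{transfers/s} = 32\ \text{B} \times 8.533 \times 10^{9}\ \text{s}^{-1} \approx 273\ \text{GB/s}
$$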

Real DGX Spark benchmarks (GPT-OSS 120B, 128k context)

| Hardware | Prefill (tok/s) | Decode (tok/s) |
|---|---|---|
| DGX Spark (NVFP4) | 1,723.1 | 38.55 |
| AMD Strix Halo (MXFP4) | 339.9 | 34.13 |
| 3× RTX 3090 (MXFP4) | 1,641.9 | 124.03 |

Source: IntuitionLabs DGX Spark Review

Critical observation: the DGX Spark is top-tier in prompt processing (prefill), but slower on token generation (decode) than three used RTX 3090s ($3,500-$4,500 total). The reason: 273 GB/s LPDDR5x memory bandwidth bottlenecks decode, while a single RTX 3090 has 936 GB/s.
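The bottleneck can be made concrete with a back-of-the-envelope model: in single-stream decode, every new token requires streaming roughly all active weights from memory once, so bandwidth divided by bytes read per token is an upper bound on tokens/s. A sketch under that assumption; it ignores KV-cache reads, compute limits, batching and speculative decoding, so real numbers land lower. The 4.5 GB workload is a hypothetical 9B dense model at 4-bit, and 1,008 GB/s is the RTX 4090’s spec bandwidth:

```python
def decode_ceiling_tok_s(bandwidth_gb_s: float, gb_per_token: float) -> float:
    """Upper bound on single-stream decode speed for a memory-bound model."""
    return bandwidth_gb_s / gb_per_token

# Hypothetical workload: 9B dense model at 4-bit ~ 4.5 GB of weights per token
GB_PER_TOKEN = 4.5

devices = {
    "DGX Spark (LPDDR5x)": 273,
    "RTX 3090 (GDDR6X)": 936,
    "RTX 4090 (GDDR6X)": 1008,
}
for name, bw in devices.items():
    print(f"{name}: <= {decode_ceiling_tok_s(bw, GB_PER_TOKEN):.0f} tok/s")
```

The absolute numbers are optimistic, but the ratio holds for any model that fits on both devices: the Spark’s decode ceiling is roughly a quarter of an RTX 4090’s.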

NVIDIA’s CES 2026 software update (TensorRT-LLM optimizations + speculative decoding) brought a 2.5× performance improvement over launch, and an 8× boost for video generation.

When does the DGX Spark make sense?

Yes:

- Prototype development is the main use case (testing many models, fine-tuning)

- NVIDIA stack (CUDA, TensorRT, NIM) integration is a must

- You need a compact desktop form factor (a mini-PC-sized box in the office, no server rack)

- Your compliance requirements (e.g., under the EU AI Act) rule out cloud deployment

No:

- Many concurrent users in production → multiple RTX 4090 / RTX 5090 servers are cheaper

- Inference-only, no fine-tuning → 2× RTX 4090 is cheaper and faster, as long as the model fits in their combined 48 GB

- You’re cost-sensitive and not locked into the NVIDIA ecosystem

Alternatives in the same category

| Device | Memory | Bandwidth | Price (2026 Q2) |
|---|---|---|---|
| NVIDIA DGX Spark | 128 GB unified | 273 GB/s | $4,699 |
| Apple Mac Studio M3 Ultra | 192–512 GB unified | >800 GB/s | $5,999–$11,999 |
| AMD Strix Halo (Ryzen AI Max+ 395) | 128 GB unified | 256 GB/s | ~$2,500 |
| 2× RTX 5090 build | 64 GB GDDR7 (2 × 32 GB) | 1,792 GB/s per GPU | ~$5,500 |

Source: Tom’s Hardware DGX Spark Review

The Mac Studio M3 Ultra beats the Spark on raw memory bandwidth and can run larger models (up to 405B parameters with the 512 GB config). The downside: no CUDA, so a lot of ML tooling works only partially.

Hybrid strategy: when local, when cloud?

Best practice in 2026 is not “everything local” - it’s the right model for the right job.

When local Qwen / Llama / DeepSeek wins

- High-volume, repetitive tasks (e.g., classifying 50,000 documents a day)

- Sensitive data (PII, healthcare, legal, financial)

- Deterministic responses (same input → same output, fixed model version)

- Latency-critical (5-20ms over LAN vs 200-500ms cloud)

When cloud APIs (OpenAI / Anthropic / Google) win

- Frontier capability (Claude Opus, GPT-5-class complex reasoning)

- Low or bursty usage (e.g., a few thousand tokens a month: no point owning idle GPUs)

- Multi-modal (video understanding, image generation - cloud is still ahead)

- Massive context (1M+ tokens in a single call)
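The two lists above amount to a routing policy. A minimal sketch of how such a rule could look in code; the `Request` fields and the 128k local context limit are hypothetical, and a production router would also weigh cost, load and model quality per task:

```python
from dataclasses import dataclass

LOCAL_CONTEXT_LIMIT = 128_000  # hypothetical: what your local deployment supports

@dataclass
class Request:
    contains_pii: bool                  # PII, healthcare, legal or financial data
    prompt_tokens: int
    needs_frontier_reasoning: bool = False
    multimodal: bool = False

def route(req: Request) -> str:
    """Return "local" or "cloud" following the rules of thumb above."""
    if req.contains_pii:
        return "local"   # sensitive data stays in-house, even if it must be chunked
    if req.prompt_tokens > LOCAL_CONTEXT_LIMIT:
        return "cloud"   # massive context
    if req.needs_frontier_reasoning or req.multimodal:
        return "cloud"   # frontier capability / multi-modal
    return "local"       # default: high-volume, repetitive work stays local
```

Putting the sensitive-data check first is the design choice that matters: compliance constraints override every capability argument.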

The break-even point - fresh 2026 data

Premai’s Q1 2026 analysis:

- 5M tokens / day average: 18-24 months payback for on-premise

- 10M tokens / day and above: 12-18 months payback

- 70B production-grade environment: $40,000–$190,000 upfront

- Hidden costs: +40-60% (operations, power, updates)

- 3-year savings: up to 50% vs cloud APIs at full utilization

Sources: Premai On-Premise LLM Deployment, SitePoint TCO Analysis 2026
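To see how the hidden-cost uplift changes the picture, here is a minimal 3-year comparison. All inputs are hypothetical placeholders except the 40-60% uplift range quoted above; applying that uplift to all visible on-prem spend (hardware plus running costs) is our assumption about how to use it:

```python
def three_year_costs(cloud_monthly: float, upfront: float,
                     local_monthly: float, hidden_uplift: float,
                     months: int = 36) -> tuple[float, float]:
    """Return (cloud_total, local_total) over the period.

    hidden_uplift: e.g. 0.4-0.6 for +40-60% hidden costs, applied here
    (an assumption) to all visible on-prem spend.
    """
    cloud_total = cloud_monthly * months
    local_total = (upfront + local_monthly * months) * (1 + hidden_uplift)
    return cloud_total, local_total

def savings_pct(cloud_total: float, local_total: float) -> float:
    """Percentage saved by going local (negative means local costs more)."""
    return 100 * (cloud_total - local_total) / cloud_total

# Hypothetical inputs: $10k/month cloud bill, $100k upfront, $2k/month running
for uplift in (0.4, 0.6):
    cloud, local = three_year_costs(10_000, 100_000, 2_000, uplift)
    print(f"uplift {uplift:.0%}: cloud ${cloud:,.0f}, local ${local:,.0f}, "
          f"savings {savings_pct(cloud, local):.0f}%")
```

With these made-up inputs the saving stays well below the “up to 50%” headline, which assumes full utilization; the result is very sensitive to how busy the hardware really is.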

European SMB context

A mid-market client of ours switched from OpenAI API to Qwen3.5-9B + RTX 4090 in January 2026:

- Before: €1,800/month OpenAI API (avg 8M tokens/day)

- After: €4,200 one-time hardware + ~€120/month power + ops

- Payback: end of month 4

- Compliance: their hospital partner finally signed off - patient data never leaves the country
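The case figures can be checked with the simplest payback formula: months = one-time cost / (monthly cloud cost - monthly local running cost). A sketch using the numbers above; it treats the €120/month as the only recurring cost and ignores setup labor, so it yields a lower bound. The throughput helper mixes input and output tokens and assumes traffic spread evenly over 24 hours, so it is only a rough capacity check:

```python
import math

def payback_months(one_time: float, cloud_monthly: float, local_monthly: float) -> float:
    """Months until the one-time cost is recovered by monthly savings (EUR in, months out)."""
    monthly_saving = cloud_monthly - local_monthly
    if monthly_saving <= 0:
        return math.inf   # local never pays off
    return one_time / monthly_saving

def avg_tokens_per_second(tokens_per_day: float) -> float:
    """Average rate the local box must sustain if load were spread over 24 h."""
    return tokens_per_day / 86_400

print(f"payback: {payback_months(4_200, 1_800, 120):.1f} months")
print(f"required average rate: {avg_tokens_per_second(8_000_000):.0f} tok/s")
```

Hardware and power alone pay back in about 2.5 months, so the reported end-of-month-4 figure leaves room for costs not itemized here. If the 8M daily tokens arrive during an 8-hour business day rather than around the clock, the peak rate is roughly three times the average.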

Implementation stack in 2026

Inference server

- vLLM 0.7+ - the de facto standard, with an OpenAI-compatible API

- TensorRT-LLM - for NVIDIA, when you need maximum speed

- Ollama 0.19+ - for developer machines; with the MLX backend on M-series Macs it runs nearly 2× faster

- llama.cpp - CPU-only or GGUF-quantized models
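Because vLLM and many cloud providers expose the same OpenAI-style `/v1/chat/completions` route, client code can switch between local and cloud by changing only the base URL. A minimal standard-library sketch that builds, but does not send, such a request; `http://localhost:8000/v1` is vLLM’s usual default when started with `vllm serve`, while the model name and API key below are placeholders:

```python
import json
import urllib.request

def build_chat_request(base_url: str, model: str, prompt: str,
                       api_key: str = "EMPTY") -> urllib.request.Request:
    """Build an OpenAI-compatible chat completion request (not sent here)."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0,   # greedy decoding for more repeatable output
        "max_tokens": 256,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )

# Local vLLM server; "local-qwen" is a placeholder that must match the served model name.
# Swap base_url (and api_key) for a cloud endpoint to run the same code against the cloud.
req = build_chat_request("http://localhost:8000/v1", "local-qwen", "Hello")
# To send: json.load(urllib.request.urlopen(req))["choices"][0]["message"]["content"]
```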

DGX Spark + vLLM quickstart - spark-vllm-docker

The most painful part of a Spark setup can be spending 2-3 days getting vLLM to build for the GB10’s new GPU architecture (compute capability 12.1, sm_121a). Luckily there’s a community project, eugr/spark-vllm-docker, built specifically for the DGX Spark (NVIDIA GB10).

What it gives you:

- Prebuilt vLLM wheels from GitHub Releases, tested nightly - no source compilation required

- Multi-node Ray cluster - link two or three DGX Sparks together via InfiniBand / RoCE

- Preconfigured model recipes: Qwen 3.5-397B (yes, 397B parameters across three Sparks!), Qwen3-Coder-Next, MiniMax M2/M2.5, GLM-4.7, Nemotron, GPT-OSS-120B

- Quantization support: AWQ, INT4-AutoRound, NVFP4, FP8

- FastSafeTensors - faster model loading

- Non-privileged container - safe production deployment

Solo (single Spark) startup:

```bash
git clone https://github.com/eugr/spark-vllm-docker.git
cd spark-vllm-docker
./build-and-copy.sh
./launch-cluster.sh --solo
```

For a two-Spark cluster, use the -c flag and the multi-node options of launch-cluster.sh.

Note: Qwen 3.6-27B isn’t in the official recipes yet (releases are very fresh), but a few-line tweak to the 3.5 recipe gets it running.

Model management & RAG

- Qdrant or Weaviate - vector DB

- LangChain or LlamaIndex - RAG framework

- Langfuse (self-hosted!) - observability, prompt tracking (detailed comparison with LangSmith)

Security layer

- Garak or Promptfoo - prompt injection testing

- NeMo Guardrails - output filtering

- Llama Guard 3 - content moderation locally

Common gotchas - what marketing materials don’t tell you

1. Memory ≠ performance

The DGX Spark with 128 GB of unified memory generates tokens more slowly than a 24 GB RTX 4090 when you’re running a 9B model, because the 4090’s ~1 TB/s memory bandwidth is nearly 4× the Spark’s 273 GB/s. Big memory only helps if you actually need the capacity.

2. Quantization has a quality tax

A Q4-quantized 70B model loses 8-12% MMLU points vs Q8. Most published benchmarks use Q8/FP16 - in production you’ll likely run Q4-Q5.

3. Long context is expensive

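KV-cache memory grows linearly with context length (it’s attention compute that grows quadratically), so a 128k window adds up fast. Depending on the architecture and cache precision, a 32B model at 128k context can need 60-80 GB of VRAM just for the KV cache.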
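The KV-cache size follows directly from the model configuration: 2 (keys and values) × layers × KV heads × head dimension × bytes per value × tokens. A sketch with a hypothetical grouped-query-attention config (64 layers, 8 KV heads, head dimension 128, 16-bit cache); read the real values from your model’s config.json:

```python
def kv_cache_gib(tokens: int, layers: int, kv_heads: int, head_dim: int,
                 bytes_per_value: int = 2) -> float:
    """KV-cache size in GiB: keys + values for every layer and every token."""
    per_token = 2 * layers * kv_heads * head_dim * bytes_per_value
    return per_token * tokens / 2**30

# Hypothetical 32B-class config with grouped-query attention (8 KV heads)
for ctx in (8_192, 32_768, 131_072):
    size = kv_cache_gib(ctx, layers=64, kv_heads=8, head_dim=128)
    print(f"{ctx:>7} tokens: {size:5.1f} GiB")
```

Doubling the context doubles the cache. A model with 40 KV heads instead of 8 would need 5× as much at the same length, an 8-bit cache halves it, and serving several users in parallel multiplies it by the number of concurrent sequences.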

4. Fine-tuning (LoRA) isn’t a silver bullet

LoRA fine-tuning is not a reliable way to write new knowledge into the model: it mainly adjusts style, format and task behavior. It doesn’t replace RAG. If you need to answer questions about your company docs, build RAG, not LoRA.
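“Build RAG” boils down to retrieving the relevant passages at query time and putting them into the prompt, instead of hoping the weights memorized them. A deliberately minimal sketch of the retrieval step, using bag-of-words cosine similarity as a stand-in for the embedding model and vector DB (Qdrant, Weaviate) of a real stack; the documents and prompt template are hypothetical:

```python
import math
import re
from collections import Counter

def vectorize(text: str) -> Counter:
    """Lower-cased word counts (stand-in for a real embedding)."""
    return Counter(re.findall(r"\w+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = vectorize(query)
    return sorted(docs, key=lambda d: cosine(q, vectorize(d)), reverse=True)[:k]

def build_prompt(query: str, docs: list[str]) -> str:
    """Ground the model in retrieved passages instead of its weights."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

docs = [
    "Employees get 25 days of paid vacation per year.",
    "The VPN must be used on all public networks.",
    "Expense reports are due by the 5th of each month.",
]
print(build_prompt("How many vacation days do employees get?", docs))
```

Updating the answer when a policy changes means editing a document, not retraining, which is the practical argument for RAG over LoRA here.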

5. The operational burden is real

An on-prem AI system needs daily oversight: GPU monitoring, model updates, security patches. Without DevOps capacity, cloud will likely be cheaper in the long run too.

Decision matrix for European SMBs

| Company size | Use case | Recommended stack |
|---|---|---|
| 1-10 staff | Experimentation, prototypes | Ollama + Qwen3.5-9B on M3/M4 Mac |
| 10-50 staff | Internal chatbot, RAG | RTX 4090 + vLLM + Qwen3.5-27B |
| 50-200 staff | Production AI 100+ users | DGX Spark or 2× RTX 5090 + vLLM |
| 200+ staff | Enterprise + compliance | Multi-GPU node + Kubernetes + private Qwen / Llama |

Where to start

If you’re starting with local AI, don’t buy hardware first. The 5-step workflow:

1. Define one concrete use case (e.g., “extract invoices with German VAT”)

2. Measure token volume (how many tokens per day?)

3. Test in the cloud first (1-2 weeks on the OpenAI / Anthropic API → validate the concept)

4. Try open-weight models in the cloud (Together.ai, Fireworks, Groq → the same Qwen / Llama models, just not on your machine)

5. Only then go local, if token volume justifies it

Most companies discover by step 4 that their OpenAI bill is 3× the cost of running Qwen3.5-9B on Together.ai, without buying a server.
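The token-volume step is easy to automate if you already call an OpenAI-compatible API during the cloud test: each response carries a `usage` object with `prompt_tokens` and `completion_tokens`. A minimal sketch that aggregates logged usage per day; the log record shape (a `date` string plus the `usage` dict) is a hypothetical format for your own logging:

```python
from collections import defaultdict
from statistics import mean

def daily_token_volume(records: list[dict]) -> dict[str, int]:
    """Sum prompt + completion tokens per day from logged API responses."""
    per_day: dict[str, int] = defaultdict(int)
    for rec in records:
        usage = rec["usage"]
        per_day[rec["date"]] += usage["prompt_tokens"] + usage["completion_tokens"]
    return dict(per_day)

# Hypothetical log entries captured during the cloud test
log = [
    {"date": "2026-04-01", "usage": {"prompt_tokens": 1200, "completion_tokens": 300}},
    {"date": "2026-04-01", "usage": {"prompt_tokens": 800, "completion_tokens": 200}},
    {"date": "2026-04-02", "usage": {"prompt_tokens": 3000, "completion_tokens": 500}},
]
volume = daily_token_volume(log)
print(volume)
print(f"average: {mean(volume.values()):,.0f} tokens/day")
```

Compare the daily average against the 5M and 10M tokens/day thresholds from the break-even analysis before pricing any hardware.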

Request a free AI infrastructure consultation

If you want to know whether your company’s AI workload pays off locally or in the cloud, our 30-minute free consultation covers:

- Current AI spend

- Data sensitivity and compliance requirements

- Expected growth

- Recommended model + hardware stack

- Expected payback in months

Request a free consultation - or check our free SEO + AI audit for a broader digital strategy review.

Related articles

- AI integration into existing systems 2026 - technical approach

- RAG systems: intelligent knowledge base - RAG deep dive

- AI integration in the real world - case studies - 7 real ROI cases

- Langfuse vs Langsmith - self-hosted AI observability

Sources

- Qwen3 Technical Report (arxiv)

- NVIDIA DGX Spark official product page

- IntuitionLabs DGX Spark Benchmarks

- Tom’s Hardware DGX Spark Review

- Premai On-Premise LLM Cost Analysis

- SitePoint Local LLMs vs Cloud TCO 2026

- Spheron - DeepSeek V3.2 vs Llama 4 vs Qwen 3
